End of training

This view is limited to 50 files because it contains too many changes.

- .gitattributes +6 -0

- README.md +21 -0

- checkpoint-1000/optimizer.bin +3 -0

- checkpoint-1000/pytorch_model.bin +3 -0

- checkpoint-1000/random_states_0.pkl +3 -0

- checkpoint-1000/scaler.pt +3 -0

- checkpoint-1000/scheduler.bin +3 -0

- checkpoint-1500/optimizer.bin +3 -0

- checkpoint-1500/pytorch_model.bin +3 -0

- checkpoint-1500/random_states_0.pkl +3 -0

- checkpoint-1500/scaler.pt +3 -0

- checkpoint-1500/scheduler.bin +3 -0

- checkpoint-2000/optimizer.bin +3 -0

- checkpoint-2000/pytorch_model.bin +3 -0

- checkpoint-2000/random_states_0.pkl +3 -0

- checkpoint-2000/scaler.pt +3 -0

- checkpoint-2000/scheduler.bin +3 -0

- checkpoint-2500/optimizer.bin +3 -0

- checkpoint-2500/pytorch_model.bin +3 -0

- checkpoint-2500/random_states_0.pkl +3 -0

- checkpoint-2500/scaler.pt +3 -0

- checkpoint-2500/scheduler.bin +3 -0

- checkpoint-3000/optimizer.bin +3 -0

- checkpoint-3000/pytorch_model.bin +3 -0

- checkpoint-3000/random_states_0.pkl +3 -0

- checkpoint-3000/scaler.pt +3 -0

- checkpoint-3000/scheduler.bin +3 -0

- checkpoint-500/optimizer.bin +3 -0

- checkpoint-500/pytorch_model.bin +3 -0

- checkpoint-500/random_states_0.pkl +3 -0

- checkpoint-500/scaler.pt +3 -0

- checkpoint-500/scheduler.bin +3 -0

- image_0.png +0 -0

- image_1.png +0 -0

- image_2.png +0 -0

- image_3.png +0 -0

- logs/text2image-fine-tune/1688955167.1630535/events.out.tfevents.1688955167.magneton.3115945.1 +3 -0

- logs/text2image-fine-tune/1688955167.1711032/hparams.yml +49 -0

- logs/text2image-fine-tune/1688962584.3811932/events.out.tfevents.1688962584.magneton.3120424.1 +3 -0

- logs/text2image-fine-tune/1688962584.6847372/hparams.yml +49 -0

- logs/text2image-fine-tune/events.out.tfevents.1688955166.magneton.3115945.0 +3 -0

- logs/text2image-fine-tune/events.out.tfevents.1688962584.magneton.3120424.0 +3 -0

- pytorch_lora_weights.bin +3 -0

- wandb/debug-internal.log +0 -0

- wandb/debug.log +27 -0

- wandb/run-20230708_162040-7wojc8os/files/config.yaml +39 -0

- wandb/run-20230708_162040-7wojc8os/files/output.log +29 -0

- wandb/run-20230708_162040-7wojc8os/files/requirements.txt +165 -0

- wandb/run-20230708_162040-7wojc8os/files/wandb-metadata.json +127 -0

- wandb/run-20230708_162040-7wojc8os/files/wandb-summary.json +1 -0
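The listing above is ordered lexicographically, which is why checkpoint-500 appears after checkpoint-3000. A minimal sketch of ordering the directories by training step instead, assuming the `checkpoint-<step>` naming seen in this commit (the helper names `checkpoint_step` and `sort_checkpoints` are hypothetical, not part of any library):

```python
def checkpoint_step(name: str) -> int:
    """Extract the integer step from a 'checkpoint-<step>' directory name."""
    prefix, _, step = name.partition("-")
    if prefix != "checkpoint" or not step.isdigit():
        raise ValueError(f"not a checkpoint directory: {name!r}")
    return int(step)


def sort_checkpoints(names):
    """Return checkpoint directory names ordered by numeric step."""
    return sorted(names, key=checkpoint_step)


# Directory names in the order they appear in the file listing.
listed = [
    "checkpoint-1000", "checkpoint-1500", "checkpoint-2000",
    "checkpoint-2500", "checkpoint-3000", "checkpoint-500",
]
ordered = sort_checkpoints(listed)
latest = ordered[-1]  # the checkpoint with the highest step
```

Sorting on the parsed integer rather than the raw string matters whenever a tool needs the most recent checkpoint, for example to resume training.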

.gitattributes

CHANGED

|

@@ -33,3 +33,9 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

wandb/run-20230708_164840-v06ksyvb/run-v06ksyvb.wandb filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

wandb/run-20230708_224302-qtf3vlop/run-qtf3vlop.wandb filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

wandb/run-20230710_001721-cfgbywnm/run-cfgbywnm.wandb filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

wandb/run-20230710_170714-2kdshdih/run-2kdshdih.wandb filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

wandb/run-20230710_170714-kkjwpam4/run-kkjwpam4.wandb filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

wandb/run-20230710_170714-ncgsl9n1/run-ncgsl9n1.wandb filter=lfs diff=lfs merge=lfs -text

|
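The added `.gitattributes` lines route the listed `.wandb` run files through Git LFS. The sketch below shows one way to check which paths a set of such rules would cover. It is an approximation under stated assumptions: real gitattributes matching has more rules than `fnmatch`, and the helper names `lfs_patterns` and `is_lfs_tracked` are hypothetical.

```python
import fnmatch
import posixpath


def lfs_patterns(gitattributes_text):
    """Return the patterns whose attribute list includes filter=lfs."""
    patterns = []
    for line in gitattributes_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        pattern, *attrs = line.split()
        if "filter=lfs" in attrs:
            patterns.append(pattern)
    return patterns


def is_lfs_tracked(path, patterns):
    """Approximate match: patterns without '/' are tested against the basename."""
    for pattern in patterns:
        target = path if "/" in pattern else posixpath.basename(path)
        if fnmatch.fnmatchcase(target, pattern):
            return True
    return False


# A few of the rules visible in this diff.
sample = """\
*.zip filter=lfs diff=lfs merge=lfs -text
*.zst filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
wandb/run-20230708_164840-v06ksyvb/run-v06ksyvb.wandb filter=lfs diff=lfs merge=lfs -text
"""
patterns = lfs_patterns(sample)
```

With these rules, the TensorBoard event files under `logs/` match `*tfevents*`, while a file such as `README.md` matches nothing and stays a regular Git blob.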

README.md

ADDED

|

@@ -0,0 +1,21 @@

|

| 1 |

+

|

| 2 |

+

---

|

| 3 |

+

license: creativeml-openrail-m

|

| 4 |

+

base_model: runwayml/stable-diffusion-v1-5

|

| 5 |

+

tags:

|

| 6 |

+

- stable-diffusion

|

| 7 |

+

- stable-diffusion-diffusers

|

| 8 |

+

- text-to-image

|

| 9 |

+

- diffusers

|

| 10 |

+

- lora

|

| 11 |

+

inference: true

|

| 12 |

+

---

|

| 13 |

+

|

| 14 |

+

# LoRA text2image fine-tuning - asrimanth/person-thumbs-up-lora

|

| 15 |

+

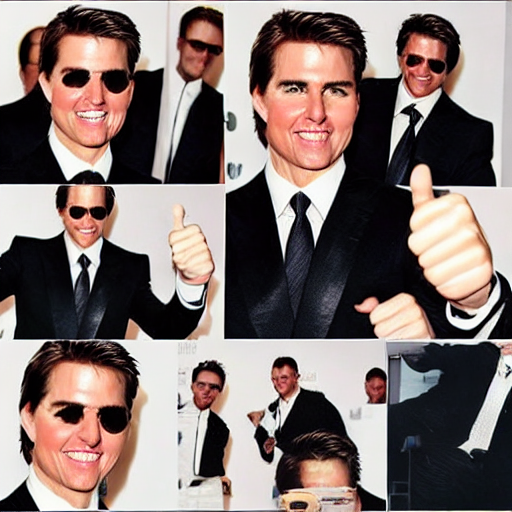

These are LoRA adaptation weights for runwayml/stable-diffusion-v1-5. The weights were fine-tuned on a custom dataset. Some example images follow.

|

| 16 |

+

|

| 17 |

+

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

|

checkpoint-1000/optimizer.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:acc770b81b4cd51d8d396ac49ad6237abe1de0f88b98675d99f6dd00c98d602e

|

| 3 |

+

size 6591685

|
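Each binary file in this commit is stored as a three-line Git LFS pointer (`version`, `oid`, `size`) rather than as the payload itself. A minimal sketch of parsing such a pointer and checking a downloaded payload against it follows; the function names `parse_lfs_pointer` and `matches_pointer` are hypothetical, and only the `sha256` scheme shown in this diff is handled.

```python
import hashlib


def parse_lfs_pointer(text):
    """Parse 'key value' lines of a Git LFS pointer file into a dict."""
    fields = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(data: bytes, pointer) -> bool:
    """Check that the payload has the size and SHA-256 digest the pointer records."""
    if pointer["algo"] != "sha256":
        raise ValueError(f"unsupported hash: {pointer['algo']}")
    return (
        len(data) == pointer["size"]
        and hashlib.sha256(data).hexdigest() == pointer["digest"]
    )


# The pointer shown above for checkpoint-1000/optimizer.bin.
pointer = parse_lfs_pointer(
    "version https://git-lfs.github.com/spec/v1\n"
    "oid sha256:acc770b81b4cd51d8d396ac49ad6237abe1de0f88b98675d99f6dd00c98d602e\n"
    "size 6591685\n"
)
```

The size check is cheap and catches truncated downloads before the hash is compared.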

checkpoint-1000/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:59f1fe3f7ed6197c881c1ea8c0fe33b8c17bd8c3883ea878206f8812572a85c5

|

| 3 |

+

size 3285965

|

checkpoint-1000/random_states_0.pkl

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:bf626b00f0c0a21c1ee2f5631667c9175d425a61775d2fd75a66283361ef7cec

|

| 3 |

+

size 16719

|

checkpoint-1000/scaler.pt

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:68cff80b680ddf6e7abbef98b5f336b97f9b5963e2209307f639383870e8cc71

|

| 3 |

+

size 557

|

checkpoint-1000/scheduler.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:61808704787602dc93c00aa68d75b73415f1c6bfacfbeb97c14702c3e19cf9f2

|

| 3 |

+

size 563

|

checkpoint-1500/optimizer.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e73f085755d82ce94c7eef162ea81a6be44d9cf83bfb978566e4cbcd44fea671

|

| 3 |

+

size 6591685

|

checkpoint-1500/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:6b99d4fd36435243e9f5590704bbb2bc273f02ee1b17121e2c47cb0e21f6743d

|

| 3 |

+

size 3285965

|

checkpoint-1500/random_states_0.pkl

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:863bba4edd1ca97dc90e081725c55f3be037349b333f06678cdaad3d9501ed3e

|

| 3 |

+

size 16719

|

checkpoint-1500/scaler.pt

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:203a72d6c29f42a0e2964fdddc8d7a98df1eccee78fea9de0fa416613390f5c6

|

| 3 |

+

size 557

|

checkpoint-1500/scheduler.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e89f6844bbfca1eeee319b6e2d6f3fd9d9df58879544d455482ad32bb3ad4df6

|

| 3 |

+

size 563

|

checkpoint-2000/optimizer.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:cd34409bd46e07d33c9329f19b9e01065b2b0b573406a8be0df757bafb8848e4

|

| 3 |

+

size 6591685

|

checkpoint-2000/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:990ebd79940a1e4dd99d3b67507594172bd3fde5ea6945c1d8ebc9062ae26070

|

| 3 |

+

size 3285965

|

checkpoint-2000/random_states_0.pkl

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5fd21d1d8596fbae8788b9060a2386fe2636e634218aff7b075ff9bbd70231b3

|

| 3 |

+

size 16719

|

checkpoint-2000/scaler.pt

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:dd2de9749828adacdf103bf6e9592702bb7067a2c1df27dd62ab38c1eb8c070f

|

| 3 |

+

size 557

|

checkpoint-2000/scheduler.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:00f6a413e75a46219e92e544cae3353a4577b60b728f62a720befd9033032acd

|

| 3 |

+

size 563

|

checkpoint-2500/optimizer.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c77079603d43b27f512ea17960b3acbf0321fa0b85e027b985677f1cdd421990

|

| 3 |

+

size 6591685

|

checkpoint-2500/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:6e09ae9cce63c0ee0d6e5e98d7e1ae9ff414394d3352c45afd14f6f98b6ae6c3

|

| 3 |

+

size 3285965

|

checkpoint-2500/random_states_0.pkl

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:39f28d64d6912cc34eaaa0bb0cd0819897835a6aae2d96c1c19aa15468bb16c1

|

| 3 |

+

size 16719

|

checkpoint-2500/scaler.pt

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0fbcebc8f5487b0c117b5dd47f2ea304af3eebf408d297118d9307e1223927e1

|

| 3 |

+

size 557

|

checkpoint-2500/scheduler.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:df00e6adcececef3aacd6addf17f6c85eae895b25ab91e673e9360b2a7f6ee86

|

| 3 |

+

size 563

|

checkpoint-3000/optimizer.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e95e8b7ea8d3aa3348d3e013cea0904c9229e2955666cb78f5a4e90bc7f6401c

|

| 3 |

+

size 6591685

|

checkpoint-3000/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a1541b4e79e7efab51d556a7179c5f74f5965962bb2aa0d29ab8c5b8837a2d02

|

| 3 |

+

size 3285965

|

checkpoint-3000/random_states_0.pkl

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d12ebe8df23ebc27557c11a245e66d96279ac2a3ff7c8b15aac109084cee9e94

|

| 3 |

+

size 16719

|

checkpoint-3000/scaler.pt

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:fb1f9398b77268202e8e1465734a63d123b1ef11c27f20f2473677e9883a6869

|

| 3 |

+

size 557

|

checkpoint-3000/scheduler.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:807d7d063623792ef9b8c66da2304691aed0888f14ad6e70a728375b9c90fe9d

|

| 3 |

+

size 563

|

checkpoint-500/optimizer.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:88a7bc9b20ad346345b5058e353cca20ea8b834a515dc312027da289b320c6e7

|

| 3 |

+

size 6591685

|

checkpoint-500/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c44be5a37b7d6cc6ac4beba90965c20ccdd2f336aa993dc70152235db222227b

|

| 3 |

+

size 3285965

|

checkpoint-500/random_states_0.pkl

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e670e6c021cd2c9f45d7068757a1a37ed11280498d332d84fc9b52b93dce0e7d

|

| 3 |

+

size 16719

|

checkpoint-500/scaler.pt

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a3f196a54202bb4ba1220e8c59f42f9cda0702d68ea83147d814c2fb2f36b8f2

|

| 3 |

+

size 557

|

checkpoint-500/scheduler.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:6bc0a22eef87625080f7c833304b0844a5e9a28131b856c035cff1e8f8d56121

|

| 3 |

+

size 563

|

image_0.png

ADDED

|

image_1.png

ADDED

|

image_2.png

ADDED

|

image_3.png

ADDED

|

logs/text2image-fine-tune/1688955167.1630535/events.out.tfevents.1688955167.magneton.3115945.1

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:b5f447f4cdd861cff146802771b78a632005903e712beccf0a6b73f15ccd5cdf

|

| 3 |

+

size 2399

|

logs/text2image-fine-tune/1688955167.1711032/hparams.yml

ADDED

|

@@ -0,0 +1,49 @@

|

| 1 |

+

adam_beta1: 0.9

|

| 2 |

+

adam_beta2: 0.999

|

| 3 |

+

adam_epsilon: 1.0e-08

|

| 4 |

+

adam_weight_decay: 0.01

|

| 5 |

+

allow_tf32: false

|

| 6 |

+

cache_dir: /l/vision/v5/sragas/hf_models/

|

| 7 |

+

caption_column: text

|

| 8 |

+

center_crop: true

|

| 9 |

+

checkpointing_steps: 500

|

| 10 |

+

checkpoints_total_limit: null

|

| 11 |

+

dataloader_num_workers: 0

|

| 12 |

+

dataset_config_name: null

|

| 13 |

+

dataset_name: null

|

| 14 |

+

enable_xformers_memory_efficient_attention: false

|

| 15 |

+

gradient_accumulation_steps: 4

|

| 16 |

+

gradient_checkpointing: false

|

| 17 |

+

hub_model_id: sachin-thumbs-up-lora

|

| 18 |

+

hub_token: null

|

| 19 |

+

image_column: image

|

| 20 |

+

learning_rate: 0.0001

|

| 21 |

+

local_rank: 0

|

| 22 |

+

logging_dir: logs

|

| 23 |

+

lr_scheduler: cosine

|

| 24 |

+

lr_warmup_steps: 0

|

| 25 |

+

max_grad_norm: 1.0

|

| 26 |

+

max_train_samples: null

|

| 27 |

+

max_train_steps: 360

|

| 28 |

+

mixed_precision: null

|

| 29 |

+

noise_offset: 0

|

| 30 |

+

num_train_epochs: 60

|

| 31 |

+

num_validation_images: 4

|

| 32 |

+

output_dir: /l/vision/v5/sragas/easel_ai/models/

|

| 33 |

+

prediction_type: null

|

| 34 |

+

pretrained_model_name_or_path: runwayml/stable-diffusion-v1-5

|

| 35 |

+

push_to_hub: true

|

| 36 |

+

random_flip: true

|

| 37 |

+

rank: 4

|

| 38 |

+

report_to: tensorboard

|

| 39 |

+

resolution: 512

|

| 40 |

+

resume_from_checkpoint: null

|

| 41 |

+

revision: null

|

| 42 |

+

scale_lr: false

|

| 43 |

+

seed: 15

|

| 44 |

+

snr_gamma: null

|

| 45 |

+

train_batch_size: 2

|

| 46 |

+

train_data_dir: /l/vision/v5/sragas/easel_ai/thumbs_up_dataset/

|

| 47 |

+

use_8bit_adam: false

|

| 48 |

+

validation_epochs: 1

|

| 49 |

+

validation_prompt: 'A person with #thumbsup'

|

logs/text2image-fine-tune/1688962584.3811932/events.out.tfevents.1688962584.magneton.3120424.1

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:ef5f855e4dcff4457f8e1694736372661f8ad463d3551cb73d9429b3c1047869

|

| 3 |

+

size 2398

|

logs/text2image-fine-tune/1688962584.6847372/hparams.yml

ADDED

|

@@ -0,0 +1,49 @@

|

| 1 |

+

adam_beta1: 0.9

|

| 2 |

+

adam_beta2: 0.999

|

| 3 |

+

adam_epsilon: 1.0e-08

|

| 4 |

+

adam_weight_decay: 0.01

|

| 5 |

+

allow_tf32: false

|

| 6 |

+

cache_dir: /l/vision/v5/sragas/hf_models/

|

| 7 |

+

caption_column: text

|

| 8 |

+

center_crop: true

|

| 9 |

+

checkpointing_steps: 500

|

| 10 |

+

checkpoints_total_limit: null

|

| 11 |

+

dataloader_num_workers: 0

|

| 12 |

+

dataset_config_name: null

|

| 13 |

+

dataset_name: null

|

| 14 |

+

enable_xformers_memory_efficient_attention: false

|

| 15 |

+

gradient_accumulation_steps: 4

|

| 16 |

+

gradient_checkpointing: false

|

| 17 |

+

hub_model_id: sachin-thumbs-up-lora

|

| 18 |

+

hub_token: null

|

| 19 |

+

image_column: image

|

| 20 |

+

learning_rate: 0.0001

|

| 21 |

+

local_rank: 0

|

| 22 |

+

logging_dir: logs

|

| 23 |

+

lr_scheduler: cosine

|

| 24 |

+

lr_warmup_steps: 0

|

| 25 |

+

max_grad_norm: 1.0

|

| 26 |

+

max_train_samples: null

|

| 27 |

+

max_train_steps: 700

|

| 28 |

+

mixed_precision: null

|

| 29 |

+

noise_offset: 0

|

| 30 |

+

num_train_epochs: 100

|

| 31 |

+

num_validation_images: 4

|

| 32 |

+

output_dir: /l/vision/v5/sragas/easel_ai/models/

|

| 33 |

+

prediction_type: null

|

| 34 |

+

pretrained_model_name_or_path: runwayml/stable-diffusion-v1-5

|

| 35 |

+

push_to_hub: true

|

| 36 |

+

random_flip: true

|

| 37 |

+

rank: 4

|

| 38 |

+

report_to: tensorboard

|

| 39 |

+

resolution: 512

|

| 40 |

+

resume_from_checkpoint: null

|

| 41 |

+

revision: null

|

| 42 |

+

scale_lr: false

|

| 43 |

+

seed: 15

|

| 44 |

+

snr_gamma: null

|

| 45 |

+

train_batch_size: 3

|

| 46 |

+

train_data_dir: /l/vision/v5/sragas/easel_ai/thumbs_up_dataset/

|

| 47 |

+

use_8bit_adam: false

|

| 48 |

+

validation_epochs: 1

|

| 49 |

+

validation_prompt: '<tom_cruise> #thumbsup'

|
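The two `hparams.yml` files record 360 steps over 60 epochs with batch size 2, and 700 steps over 100 epochs with batch size 3, both with 4 gradient accumulation steps, a cosine schedule, and no warmup. The sketch below works through the arithmetic these values imply. It rests on assumptions: `max_train_steps` is taken to be derived from the epoch count rather than set by hand, the process count is not recorded in these files (so it defaults to 1 here), and `cosine_lr` is the textbook half-cosine decay, not necessarily the exact schedule the training script built.

```python
import math


def updates_per_epoch(max_train_steps, num_train_epochs):
    """Optimizer updates per epoch implied by the two recorded totals."""
    return max_train_steps / num_train_epochs


def samples_per_update(train_batch_size, gradient_accumulation_steps, num_processes=1):
    """Images contributing to one optimizer update (effective batch size)."""
    return train_batch_size * gradient_accumulation_steps * num_processes


def cosine_lr(step, total_steps, base_lr, warmup_steps=0):
    """Half-cosine decay from base_lr to 0, with optional linear warmup."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))


# Values copied from the two hparams.yml files in this commit.
first_run = updates_per_epoch(max_train_steps=360, num_train_epochs=60)
second_run = updates_per_epoch(max_train_steps=700, num_train_epochs=100)
first_batch = samples_per_update(train_batch_size=2, gradient_accumulation_steps=4)
second_batch = samples_per_update(train_batch_size=3, gradient_accumulation_steps=4)
halfway_lr = cosine_lr(step=180, total_steps=360, base_lr=1e-4)
```

Under these assumptions the first run performs 6 updates per epoch on 8 images each, the second 7 updates per epoch on 12 images each, and the learning rate of the first run has fallen to half of 0.0001 by step 180.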

logs/text2image-fine-tune/events.out.tfevents.1688955166.magneton.3115945.0

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:98e366dd41155488121dc6e6b816f564dd5abb7d3b97e7b23c6f5521972aff09

|

| 3 |

+

size 88

|

logs/text2image-fine-tune/events.out.tfevents.1688962584.magneton.3120424.0

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:91956d6612c16c0d39d3feb1915fdd2a9fc6c1093a4acf6828307527b152dfea

|

| 3 |

+

size 88

|

pytorch_lora_weights.bin

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:1613e7cca2fa0fc15cc06a4dcdcbfb1568c9a80c32aad18dfe283b7f9c5ea2ca

|

| 3 |

+

size 3287771

|

wandb/debug-internal.log

ADDED

|

The diff for this file is too large to render.

|

|

|

wandb/debug.log

ADDED

|

@@ -0,0 +1,27 @@

|

| 1 |

+

2023-07-10 17:07:15,048 INFO MainThread:3133504 [wandb_setup.py:_flush():76] Current SDK version is 0.15.4

|

| 2 |

+

2023-07-10 17:07:15,048 INFO MainThread:3133504 [wandb_setup.py:_flush():76] Configure stats pid to 3133504

|

| 3 |

+

2023-07-10 17:07:15,049 INFO MainThread:3133504 [wandb_setup.py:_flush():76] Loading settings from /u/sragas/.config/wandb/settings

|

| 4 |

+

2023-07-10 17:07:15,049 INFO MainThread:3133504 [wandb_setup.py:_flush():76] Loading settings from /nfs/nfs2/home/sragas/demo/wandb/settings

|

| 5 |

+

2023-07-10 17:07:15,049 INFO MainThread:3133504 [wandb_setup.py:_flush():76] Loading settings from environment variables: {}

|

| 6 |

+

2023-07-10 17:07:15,049 INFO MainThread:3133504 [wandb_setup.py:_flush():76] Applying setup settings: {'_disable_service': False}

|

| 7 |

+

2023-07-10 17:07:15,049 INFO MainThread:3133504 [wandb_setup.py:_flush():76] Inferring run settings from compute environment: {'program_relpath': 'train_text_to_image_lora.py', 'program': 'train_text_to_image_lora.py'}

|

| 8 |

+

2023-07-10 17:07:15,049 INFO MainThread:3133504 [wandb_init.py:_log_setup():507] Logging user logs to /l/vision/v5/sragas/easel_ai/models/wandb/run-20230710_170714-2kdshdih/logs/debug.log

|

| 9 |

+

2023-07-10 17:07:15,049 INFO MainThread:3133504 [wandb_init.py:_log_setup():508] Logging internal logs to /l/vision/v5/sragas/easel_ai/models/wandb/run-20230710_170714-2kdshdih/logs/debug-internal.log

|

| 10 |

+

2023-07-10 17:07:15,049 INFO MainThread:3133504 [wandb_init.py:init():547] calling init triggers

|

| 11 |

+

2023-07-10 17:07:15,049 INFO MainThread:3133504 [wandb_init.py:init():554] wandb.init called with sweep_config: {}

|

| 12 |

+

config: {}

|

| 13 |

+

2023-07-10 17:07:15,050 INFO MainThread:3133504 [wandb_init.py:init():596] starting backend

|

| 14 |

+

2023-07-10 17:07:15,050 INFO MainThread:3133504 [wandb_init.py:init():600] setting up manager

|

| 15 |

+

2023-07-10 17:07:15,053 INFO MainThread:3133504 [backend.py:_multiprocessing_setup():106] multiprocessing start_methods=fork,spawn,forkserver, using: spawn

|

| 16 |

+

2023-07-10 17:07:15,056 INFO MainThread:3133504 [wandb_init.py:init():606] backend started and connected

|

| 17 |

+

2023-07-10 17:07:15,063 INFO MainThread:3133504 [wandb_init.py:init():703] updated telemetry

|

| 18 |

+

2023-07-10 17:07:15,064 INFO MainThread:3133504 [wandb_init.py:init():736] communicating run to backend with 60.0 second timeout

|

| 19 |

+

2023-07-10 17:07:15,246 INFO MainThread:3133504 [wandb_run.py:_on_init():2176] communicating current version

|

| 20 |

+

2023-07-10 17:07:15,317 INFO MainThread:3133504 [wandb_run.py:_on_init():2185] got version response upgrade_message: "wandb version 0.15.5 is available! To upgrade, please run:\n $ pip install wandb --upgrade"

|

| 21 |

+

|

| 22 |

+

2023-07-10 17:07:15,317 INFO MainThread:3133504 [wandb_init.py:init():787] starting run threads in backend

|

| 23 |

+

2023-07-10 17:07:15,502 INFO MainThread:3133504 [wandb_run.py:_console_start():2155] atexit reg

|

| 24 |

+

2023-07-10 17:07:15,503 INFO MainThread:3133504 [wandb_run.py:_redirect():2010] redirect: SettingsConsole.WRAP_RAW

|

| 25 |

+

2023-07-10 17:07:15,503 INFO MainThread:3133504 [wandb_run.py:_redirect():2075] Wrapping output streams.

|

| 26 |

+

2023-07-10 17:07:15,503 INFO MainThread:3133504 [wandb_run.py:_redirect():2100] Redirects installed.

|

| 27 |

+

2023-07-10 17:07:15,504 INFO MainThread:3133504 [wandb_init.py:init():828] run started, returning control to user process

|
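The entries in `wandb/debug.log` share one layout: timestamp, level, thread and process ID, a bracketed `file:function():line` location, then the message. A minimal sketch of splitting such a line into fields, assuming only the layout visible above (the regular expression and the `parse_line` helper are illustrative, not part of the wandb SDK):

```python
import re
from datetime import datetime

LINE_RE = re.compile(
    r"^(?P<time>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2},\d{3}) "
    r"(?P<level>[A-Z]+)\s+"
    r"(?P<thread>[^:\s]+):(?P<pid>\d+) "
    r"\[(?P<file>[^:\]]+):(?P<func>\w+)\(\):(?P<line>\d+)\] "
    r"(?P<message>.*)$"
)


def parse_line(line):
    """Return a dict of fields, or None for continuation lines that do not match."""
    match = LINE_RE.match(line)
    if match is None:
        return None
    record = match.groupdict()
    record["time"] = datetime.strptime(record["time"], "%Y-%m-%d %H:%M:%S,%f")
    record["pid"] = int(record["pid"])
    record["line"] = int(record["line"])
    return record


record = parse_line(
    "2023-07-10 17:07:15,048 INFO MainThread:3133504 "
    "[wandb_setup.py:_flush():76] Current SDK version is 0.15.4"
)
```

Lines such as `config: {}` continue the previous message and do not match the pattern, so `parse_line` returns `None` for them and a caller can append them to the preceding record.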

wandb/run-20230708_162040-7wojc8os/files/config.yaml

ADDED

|

@@ -0,0 +1,39 @@

|

| 1 |

+

wandb_version: 1

|

| 2 |

+

|

| 3 |

+

_wandb:

|

| 4 |

+

desc: null

|

| 5 |

+

value:

|

| 6 |

+

python_version: 3.8.10

|

| 7 |

+

cli_version: 0.15.4

|

| 8 |

+

framework: huggingface

|

| 9 |

+

huggingface_version: 4.30.2

|

| 10 |

+

is_jupyter_run: false

|

| 11 |

+

is_kaggle_kernel: true

|

| 12 |

+

start_time: 1688847640.60948

|

| 13 |

+

t:

|

| 14 |

+

1:

|

| 15 |

+

- 1

|

| 16 |

+

- 11

|

| 17 |

+

- 41

|

| 18 |

+

- 49

|

| 19 |

+

- 51

|

| 20 |

+

- 55

|

| 21 |

+

- 71

|

| 22 |

+

- 83

|

| 23 |

+

2:

|

| 24 |

+

- 1

|

| 25 |

+

- 11

|

| 26 |

+

- 41

|

| 27 |

+

- 49

|

| 28 |

+

- 51

|

| 29 |

+

- 55

|

| 30 |

+

- 71

|

| 31 |

+

- 83

|

| 32 |

+

3:

|

| 33 |

+

- 23

|

| 34 |

+

4: 3.8.10

|

| 35 |

+

5: 0.15.4

|

| 36 |

+

6: 4.30.2

|

| 37 |

+

8:

|

| 38 |

+

- 2

|

| 39 |

+

- 5

|

wandb/run-20230708_162040-7wojc8os/files/output.log

ADDED

|

@@ -0,0 +1,29 @@

|

| 1 |

+

07/08/2023 16:20:41 - INFO - __main__ - Distributed environment: MULTI_GPU Backend: nccl

|

| 2 |

+

Num processes: 3

|

| 3 |

+

Process index: 1

|

| 4 |

+

Local process index: 1

|

| 5 |

+

Device: cuda:1

|

| 6 |

+

Mixed precision type: fp16

|

| 7 |

+

Traceback (most recent call last):

|

| 8 |

+

File "/nfs/blitzle/home/data/vision5/sragas/easel_venv/lib/python3.8/site-packages/diffusers/utils/hub_utils.py", line 311, in _get_model_file

|

| 9 |

+

model_file = hf_hub_download(

|

| 10 |

+

File "/nfs/blitzle/home/data/vision5/sragas/easel_venv/lib/python3.8/site-packages/huggingface_hub/utils/_validators.py", line 118, in _inner_fn

|

| 11 |

+

return fn(*args, **kwargs)

|

| 12 |

+

File "/nfs/blitzle/home/data/vision5/sragas/easel_venv/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 1361, in hf_hub_download

|

| 13 |

+

with temp_file_manager() as temp_file:

|

| 14 |

+

File "/usr/lib/python3.8/tempfile.py", line 679, in NamedTemporaryFile

|

| 15 |

+

(fd, name) = _mkstemp_inner(dir, prefix, suffix, flags, output_type)

|

| 16 |

+

File "/usr/lib/python3.8/tempfile.py", line 389, in _mkstemp_inner

|

| 17 |

+

fd = _os.open(file, flags, 0o600)

|

| 18 |

+

OSError: [Errno 122] Disk quota exceeded: '/u/sragas/.cache/huggingface/hub/tmpltn5e7d6'

|

| 19 |

+

During handling of the above exception, another exception occurred:

|

| 20 |

+

Traceback (most recent call last):

|

| 21 |

+

File "train_text_to_image_lora.py", line 951, in <module>

|

| 22 |

+

main()

|

| 23 |

+

File "train_text_to_image_lora.py", line 426, in main

|

| 24 |

+

unet = UNet2DConditionModel.from_pretrained(

|

| 25 |

+

File "/nfs/blitzle/home/data/vision5/sragas/easel_venv/lib/python3.8/site-packages/diffusers/models/modeling_utils.py", line 576, in from_pretrained

|

| 26 |

+

model_file = _get_model_file(

|

| 27 |

+

File "/nfs/blitzle/home/data/vision5/sragas/easel_venv/lib/python3.8/site-packages/diffusers/utils/hub_utils.py", line 356, in _get_model_file

|

| 28 |

+

raise EnvironmentError(

|

| 29 |

+

OSError: Can't load the model for 'runwayml/stable-diffusion-v1-5'. If you were trying to load it from 'https://huggingface.co/models', make sure you don't have a local directory with the same name. Otherwise, make sure 'runwayml/stable-diffusion-v1-5' is the correct path to a directory containing a file named diffusion_pytorch_model.bin

|
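The traceback above starts with `Disk quota exceeded` while writing a temporary file under the default Hugging Face cache in the home directory; the second `OSError` is only the downstream symptom. One common workaround is to point the cache at a volume with more room before the libraries are imported, which is consistent with the `cache_dir` on a different volume recorded in the `hparams.yml` files of this commit. The sketch below is a hedged illustration: `first_writable_dir` and `configure_hf_cache` are hypothetical helpers, the candidate paths are supplied by the caller, and a successful probe write does not guarantee that a multi-gigabyte download fits within a quota.

```python
import os
import tempfile


def first_writable_dir(candidates):
    """Return the first candidate directory that accepts a small test file."""
    for path in candidates:
        try:
            os.makedirs(path, exist_ok=True)
            # Probe write; the temporary file is deleted when the block exits.
            with tempfile.NamedTemporaryFile(dir=path):
                pass
        except OSError:
            continue
        return path
    return None


def configure_hf_cache(candidates):
    """Export HF_HOME so Hugging Face libraries imported later use the chosen directory."""
    chosen = first_writable_dir(candidates)
    if chosen is None:
        raise RuntimeError("no writable cache directory found")
    os.environ["HF_HOME"] = chosen
    return chosen
```

`HF_HOME` has to be set before `huggingface_hub` or `diffusers` is imported, because the cache location is resolved at import time; passing an explicit `cache_dir` to `from_pretrained` is the per-call alternative.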

wandb/run-20230708_162040-7wojc8os/files/requirements.txt

ADDED

|

@@ -0,0 +1,165 @@

|

| 1 |

+

absl-py==1.4.0

|

| 2 |

+

accelerate==0.20.3

|

| 3 |

+

aiohttp==3.8.4

|

| 4 |

+

aiosignal==1.3.1

|

| 5 |

+

anyio==3.7.0

|

| 6 |

+

appdirs==1.4.4

|

| 7 |

+

argon2-cffi-bindings==21.2.0

|

| 8 |

+

argon2-cffi==21.3.0

|

| 9 |

+

asttokens==2.2.1

|

| 10 |

+

async-lru==2.0.2

|

| 11 |

+

async-timeout==4.0.2

|

| 12 |

+

attrs==23.1.0

|

| 13 |

+

babel==2.12.1

|

| 14 |

+

backcall==0.2.0

|

| 15 |

+

beautifulsoup4==4.12.2

|

| 16 |

+

bleach==6.0.0

|

| 17 |

+

cachetools==5.3.1

|

| 18 |

+

certifi==2023.5.7

|

| 19 |

+

cffi==1.15.1

|

| 20 |

+

charset-normalizer==3.1.0

|

| 21 |

+

click==8.1.3

|

| 22 |

+

cmake==3.26.4

|

| 23 |

+

comm==0.1.3

|

| 24 |

+

datasets==2.13.1

|

| 25 |

+

debugpy==1.6.7

|

| 26 |

+

decorator==5.1.1

|

| 27 |

+

defusedxml==0.7.1

|

| 28 |

+

diffusers==0.18.0.dev0

|

| 29 |

+

dill==0.3.6

|

| 30 |

+

docker-pycreds==0.4.0

|

| 31 |

+

exceptiongroup==1.1.2

|

| 32 |

+

executing==1.2.0

|

| 33 |

+

fastjsonschema==2.17.1

|

| 34 |

+

filelock==3.12.2

|

| 35 |

+

frozenlist==1.3.3

|

| 36 |

+

fsspec==2023.6.0

|

| 37 |

+

ftfy==6.1.1

|

| 38 |

+

gitdb==4.0.10

|

| 39 |

+

gitpython==3.1.31

|

| 40 |

+

google-auth-oauthlib==1.0.0

|

| 41 |

+

google-auth==2.21.0

|

| 42 |

+

grpcio==1.56.0

|

| 43 |

+

huggingface-hub==0.15.1

|

| 44 |

+

idna==3.4

|

| 45 |

+

importlib-metadata==6.7.0

|

| 46 |

+

importlib-resources==5.12.0

|

| 47 |

+

ipykernel==6.24.0

|

| 48 |

+

ipython==8.12.2

|

| 49 |

+

jedi==0.18.2

|

| 50 |

+

jinja2==3.1.2

|

| 51 |

+

json5==0.9.14

|

| 52 |

+

jsonschema==4.17.3

|

| 53 |

+

jupyter-client==8.3.0

|

| 54 |

+

jupyter-core==5.3.1

|

| 55 |

+

jupyter-events==0.6.3

|

| 56 |

+

jupyter-lsp==2.2.0

|

| 57 |

+

jupyter-server-terminals==0.4.4

|

| 58 |

+

jupyter-server==2.7.0

|

| 59 |

+

jupyterlab-pygments==0.2.2

|

| 60 |

+

jupyterlab-server==2.23.0

|

| 61 |

+

jupyterlab==4.0.2

|

| 62 |

+

lit==16.0.6

|

| 63 |

+

markdown==3.4.3

|

| 64 |

+

markupsafe==2.1.3

|

| 65 |

+

matplotlib-inline==0.1.6

|

| 66 |

+

mistune==3.0.1

|

| 67 |

+

mpmath==1.3.0

|

| 68 |

+

multidict==6.0.4

|

| 69 |

+

multiprocess==0.70.14

|

| 70 |

+

mypy-extensions==1.0.0

|

| 71 |

+

nbclient==0.8.0

|

| 72 |

+

nbconvert==7.6.0

|

| 73 |

+

nbformat==5.9.0

|

| 74 |

+

nest-asyncio==1.5.6

|

| 75 |

+

networkx==3.1

|

| 76 |

+

notebook-shim==0.2.3

|

| 77 |

+

numpy==1.24.4

|

| 78 |

+

nvidia-cublas-cu11==11.10.3.66

|

| 79 |

+

nvidia-cuda-cupti-cu11==11.7.101

|

| 80 |

+

nvidia-cuda-nvrtc-cu11==11.7.99

|

| 81 |

+

nvidia-cuda-runtime-cu11==11.7.99

|

| 82 |

+

nvidia-cudnn-cu11==8.5.0.96

|

| 83 |

+

nvidia-cufft-cu11==10.9.0.58

|

| 84 |

+

nvidia-curand-cu11==10.2.10.91

|

| 85 |

+

nvidia-cusolver-cu11==11.4.0.1

|

| 86 |

+

nvidia-cusparse-cu11==11.7.4.91

|

| 87 |

+

nvidia-nccl-cu11==2.14.3

|

| 88 |

+

nvidia-nvtx-cu11==11.7.91

|

| 89 |

+

oauthlib==3.2.2

|

| 90 |

+

overrides==7.3.1

|

| 91 |

+

packaging==23.1

|

| 92 |

+

pandas==2.0.3

|

| 93 |

+

pandocfilters==1.5.0

|

| 94 |

+

parso==0.8.3

|

| 95 |

+

pathtools==0.1.2

|

| 96 |

+

pexpect==4.8.0

|

| 97 |

+

pickleshare==0.7.5

|

| 98 |

+

pillow==10.0.0

|

| 99 |

+

pip==20.0.2

|

| 100 |

+

pkg-resources==0.0.0

|

| 101 |

+

pkgutil-resolve-name==1.3.10

|

| 102 |

+

platformdirs==3.8.0

|

| 103 |

+

prometheus-client==0.17.0

|

| 104 |

+

prompt-toolkit==3.0.39

|

| 105 |

+

protobuf==4.23.3

|

| 106 |

+

psutil==5.9.5

|

| 107 |

+

ptyprocess==0.7.0

|

| 108 |

+

pure-eval==0.2.2

|

| 109 |

+

pyarrow==12.0.1

|

| 110 |

+

pyasn1-modules==0.3.0

|

| 111 |

+

pyasn1==0.5.0

|

| 112 |

+

pycparser==2.21

|

| 113 |

+

pygments==2.15.1

|

| 114 |

+

pyre-extensions==0.0.29

|

| 115 |

+

pyrsistent==0.19.3

|

| 116 |

+

python-dateutil==2.8.2

|

| 117 |

+

python-json-logger==2.0.7

|

| 118 |

+

pytz==2023.3

|

| 119 |

+

pyyaml==6.0

|

| 120 |

+

pyzmq==25.1.0

|

| 121 |

+

regex==2023.6.3

|

| 122 |

+

requests-oauthlib==1.3.1

|

| 123 |

+

requests==2.31.0

|

| 124 |

+

rfc3339-validator==0.1.4

|

| 125 |

+

rfc3986-validator==0.1.1

|

| 126 |

+

rsa==4.9

|

| 127 |

+

safetensors==0.3.1

|

| 128 |

+

send2trash==1.8.2

|

| 129 |

+

sentry-sdk==1.27.0

|

| 130 |

+

setproctitle==1.3.2

|

| 131 |

+

setuptools==44.0.0

|

| 132 |

+

six==1.16.0

|

| 133 |

+

smmap==5.0.0

|

| 134 |

+

sniffio==1.3.0

|

| 135 |

+

soupsieve==2.4.1

|

| 136 |

+

stack-data==0.6.2

|

| 137 |

+

sympy==1.12

|

| 138 |

+

tensorboard-data-server==0.7.1

|

| 139 |

+

tensorboard==2.13.0

|

| 140 |

+

terminado==0.17.1

|

| 141 |

+

tinycss2==1.2.1

|

| 142 |

+

tokenizers==0.13.3

|

| 143 |

+

tomli==2.0.1

|

| 144 |

+

torch==2.0.1

|

| 145 |

+

torchaudio==2.0.2

|

| 146 |

+

torchvision==0.15.2

|

| 147 |

+

tornado==6.3.2

|

| 148 |

+

tqdm==4.65.0

|

| 149 |

+

traitlets==5.9.0

|

| 150 |

+

transformers==4.30.2

|

| 151 |

+

triton==2.0.0

|

| 152 |

+

typing-extensions==4.7.1

|

| 153 |

+

typing-inspect==0.9.0

|

| 154 |

+

tzdata==2023.3

|

| 155 |

+

urllib3==2.0.3

|

| 156 |

+

wandb==0.15.4

|

| 157 |

+

wcwidth==0.2.6

|

| 158 |

+

webencodings==0.5.1

|

| 159 |

+

websocket-client==1.6.1

|

| 160 |

+

werkzeug==2.3.6

|

| 161 |

+

wheel==0.40.0

|

| 162 |

+

xformers==0.0.20

|

| 163 |

+

xxhash==3.2.0

|

| 164 |

+

yarl==1.9.2

|

| 165 |

+

zipp==3.15.0

|
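The pinned list above uses the plain `name==version` form, so it can be loaded into a lookup table when comparing environments. The following is a minimal sketch, not part of the original run: `parse_pins` is a hypothetical helper name, and the sample string copies only a few of the pins shown above.

```python
def parse_pins(text):
    """Map package name -> pinned version for lines of the form name==version."""
    pins = {}
    for raw in text.splitlines():
        line = raw.strip()
        # Skip blanks, comments, and anything that is not an exact pin.
        if not line or line.startswith("#") or "==" not in line:
            continue
        name, version = line.split("==", 1)
        pins[name.strip().lower()] = version.strip()
    return pins


# A few pins copied from the list above (illustrative subset only).
sample = """\
torch==2.0.1
torchvision==0.15.2
transformers==4.30.2
xformers==0.0.20
"""

pins = parse_pins(sample)
print(pins["torch"])
```

Lower-casing the names is a design choice for case-insensitive lookups; it does not handle extras, environment markers, or other specifier operators.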

wandb/run-20230708_162040-7wojc8os/files/wandb-metadata.json

ADDED

|

@@ -0,0 +1,127 @@

|

| 1 |

+

{

|

| 2 |

+

"os": "Linux-5.15.0-60-generic-x86_64-with-glibc2.29",

|

| 3 |

+

"python": "3.8.10",

|

| 4 |

+

"heartbeatAt": "2023-07-08T20:20:41.026887",

|

| 5 |

+

"startedAt": "2023-07-08T20:20:40.579314",

|

| 6 |

+

"docker": null,

|

| 7 |

+

"cuda": null,

|

| 8 |

+

"args": [

|

| 9 |

+

"--pretrained_model_name_or_path=runwayml/stable-diffusion-v1-5",

|

| 10 |

+

"--train_data_dir=/l/vision/v5/sragas/easel_ai/thumbs_up_dataset/",

|

| 11 |

+

"--resolution=512",

|

| 12 |

+

"--center_crop",

|

| 13 |

+

"--random_flip",

|

| 14 |

+

"--train_batch_size=1",

|

| 15 |

+

"--gradient_accumulation_steps=4",

|

| 16 |

+

"--max_train_steps=15000",

|

| 17 |

+

"--learning_rate=1e-04",

|

| 18 |

+

"--max_grad_norm=1",

|

| 19 |

+

"--lr_scheduler=cosine",

|

| 20 |

+

"--lr_warmup_steps=0",

|

| 21 |

+

"--output_dir=/l/vision/v5/sragas/easel_ai/models/",

|

| 22 |

+

"--push_to_hub",

|

| 23 |

+

"--hub_model_id=thumbs-up-lora",

|

| 24 |

+

"--report_to=wandb",

|

| 25 |

+

"--checkpointing_steps=500",

|

| 26 |

+

"--validation_prompt=A person with #thumbsup",

|

| 27 |

+

"--seed=1337"

|

| 28 |

+

],

|

| 29 |

+

"state": "running",

|

| 30 |

+

"program": "train_text_to_image_lora.py",

|

| 31 |

+

"codePath": "train_text_to_image_lora.py",

|

| 32 |

+

"host": "magneton",

|

| 33 |

+

"username": "sragas",

|

| 34 |

+

"executable": "/nfs/blitzle/home/data/vision5/sragas/easel_venv/bin/python3",

|

| 35 |

+

"cpu_count": 6,

|

| 36 |

+

"cpu_count_logical": 12,

|

| 37 |

+

"cpu_freq": {

|

| 38 |

+

"current": 1897.8334166666666,

|

| 39 |

+

"min": 1200.0,

|

| 40 |

+

"max": 3700.0

|

| 41 |

+

},

|

| 42 |

+

"cpu_freq_per_core": [

|

| 43 |

+

{

|

| 44 |

+

"current": 1200.0,

|

| 45 |

+

"min": 1200.0,

|

| 46 |

+

"max": 3700.0

|

| 47 |

+

},

|

| 48 |

+

{

|

| 49 |

+

"current": 1456.076,

|

| 50 |

+

"min": 1200.0,

|

| 51 |

+

"max": 3700.0

|

| 52 |

+

},

|

| 53 |

+

{

|

| 54 |

+

"current": 1300.0,

|

| 55 |

+

"min": 1200.0,

|

| 56 |

+

"max": 3700.0

|

| 57 |

+

},

|

| 58 |

+

{

|

| 59 |

+

"current": 2496.8,

|

| 60 |

+

"min": 1200.0,

|

| 61 |

+

"max": 3700.0

|

| 62 |

+

},

|

| 63 |

+

{

|

| 64 |

+

"current": 2981.104,

|

| 65 |

+

"min": 1200.0,

|

| 66 |

+

"max": 3700.0

|

| 67 |

+

},

|

| 68 |

+

{

|

| 69 |

+

"current": 1200.0,

|

| 70 |

+

"min": 1200.0,

|

| 71 |

+

"max": 3700.0

|

| 72 |

+

},

|

| 73 |

+

{

|

| 74 |

+

"current": 1200.0,

|

| 75 |

+

"min": 1200.0,

|

| 76 |

+

"max": 3700.0

|

| 77 |

+

},

|

| 78 |

+

{

|

| 79 |

+

"current": 1300.0,

|

| 80 |

+

"min": 1200.0,

|

| 81 |

+

"max": 3700.0

|

| 82 |

+

},

|

| 83 |

+

{

|

| 84 |

+

"current": 2200.0,

|

| 85 |

+

"min": 1200.0,

|

| 86 |

+

"max": 3700.0

|

| 87 |

+

},

|

| 88 |

+

{

|

| 89 |

+

"current": 1300.0,

|

| 90 |

+

"min": 1200.0,

|

| 91 |

+

"max": 3700.0

|

| 92 |

+

},

|

| 93 |

+

{

|

| 94 |

+

"current": 1200.0,

|

| 95 |

+

"min": 1200.0,

|

| 96 |

+

"max": 3700.0

|

| 97 |

+

},

|

| 98 |

+

{

|

| 99 |

+

"current": 2435.006,

|

| 100 |

+

"min": 1200.0,

|

| 101 |

+

"max": 3700.0

|

| 102 |

+

}

|

| 103 |

+

],

|

| 104 |

+

"disk": {

|

| 105 |

+

"total": 467.8895797729492,

|

| 106 |

+

"used": 42.67890548706055

|

| 107 |

+

},

|

| 108 |

+

"gpu": "NVIDIA GeForce GTX TITAN X",

|

| 109 |

+

"gpu_count": 3,

|

| 110 |

+

"gpu_devices": [

|

| 111 |

+

{

|

| 112 |

+

"name": "NVIDIA GeForce GTX TITAN X",

|

| 113 |

+

"memory_total": 12884901888

|

| 114 |

+

},

|

| 115 |

+

{

|

| 116 |

+

"name": "NVIDIA TITAN X (Pascal)",

|

| 117 |

+

"memory_total": 12884901888

|

| 118 |

+

},

|

| 119 |

+

{

|

| 120 |

+

"name": "NVIDIA GeForce GTX TITAN X",

|

| 121 |

+

"memory_total": 12884901888

|

| 122 |

+

}

|

| 123 |

+

],

|

| 124 |

+

"memory": {

|

| 125 |

+

"total": 31.240768432617188

|

| 126 |

+

}

|

| 127 |

+

}

|
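The `args` array recorded in the metadata above implies an effective batch size and a total number of training samples. The sketch below derives them under stated assumptions: `parse_flags` is a hypothetical helper, the figures are per process (the metadata does not record how many processes were launched), and the GPU memory figure is simply the recorded `memory_total` converted to GiB.

```python
def parse_flags(args):
    """Turn ['--key=value', '--switch'] into {'key': 'value', 'switch': True}."""
    flags = {}
    for arg in args:
        key, sep, value = arg.lstrip("-").partition("=")
        flags[key] = value if sep else True
    return flags


# Subset of the arguments recorded in wandb-metadata.json above.
args = [
    "--train_batch_size=1",
    "--gradient_accumulation_steps=4",
    "--max_train_steps=15000",
    "--center_crop",
]

flags = parse_flags(args)

# Samples contributing to each optimizer step, per process (assumption: one process).
effective_batch = int(flags["train_batch_size"]) * int(flags["gradient_accumulation_steps"])

# Upper bound on samples seen if the run reached max_train_steps.
samples_seen = effective_batch * int(flags["max_train_steps"])

# memory_total from gpu_devices, converted from bytes to GiB.
gpu_gib = 12884901888 / 2**30

print(effective_batch, samples_seen, gpu_gib)
```

Note that `max_train_steps` is only a ceiling on the number of optimizer steps, so `samples_seen` is an upper bound rather than a record of what the run actually processed.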

wandb/run-20230708_162040-7wojc8os/files/wandb-summary.json

ADDED

|

@@ -0,0 +1 @@

|

| 1 |

+

{"_wandb": {"runtime": 53}}

|