---
library_name: transformers
license: apache-2.0
language:
- en
metrics:
- accuracy
pipeline_tag: image-classification
---

# Model Card for vit-base-patch16-224-finetuned-lora-oxford-pets

This is the model card of a 🤗 Transformers model that has been pushed to the Hub. This model card has been automatically generated.

- **Developed by:** [Mohammed Vasim](https://huggingface.co/md-vasim)
- **Model type:** Vision Transformer (ViT) for image classification, fine-tuned with LoRA
- **Fine-tuned from model:** [google/vit-base-patch16-224](https://huggingface.co/google/vit-base-patch16-224)

### Model Sources

<!-- Provide the basic links for the model. -->

- **Repository:** [md-vasim/vit-base-patch16-224-finetuned-lora-oxford-pets](https://huggingface.co/md-vasim/vit-base-patch16-224-finetuned-lora-oxford-pets)
- **Demo:** [https://huggingface.co/spaces/md-vasim](https://huggingface.co/spaces/md-vasim)

# Vision Transformer (base-sized model)

The base Vision Transformer (ViT) model was pre-trained on ImageNet-21k (14 million images, 21,843 classes) at resolution 224x224 and fine-tuned on ImageNet 2012 (1 million images, 1,000 classes) at resolution 224x224. It was introduced in the paper [An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale](https://arxiv.org/abs/2010.11929) by Dosovitskiy et al. and first released in [this repository](https://github.com/google-research/vision_transformer). The weights were converted from the [timm repository](https://github.com/rwightman/pytorch-image-models) by Ross Wightman, who had already converted them from JAX to PyTorch. Credit goes to him.

## Model description

|

| 42 |

|

| 43 |

+

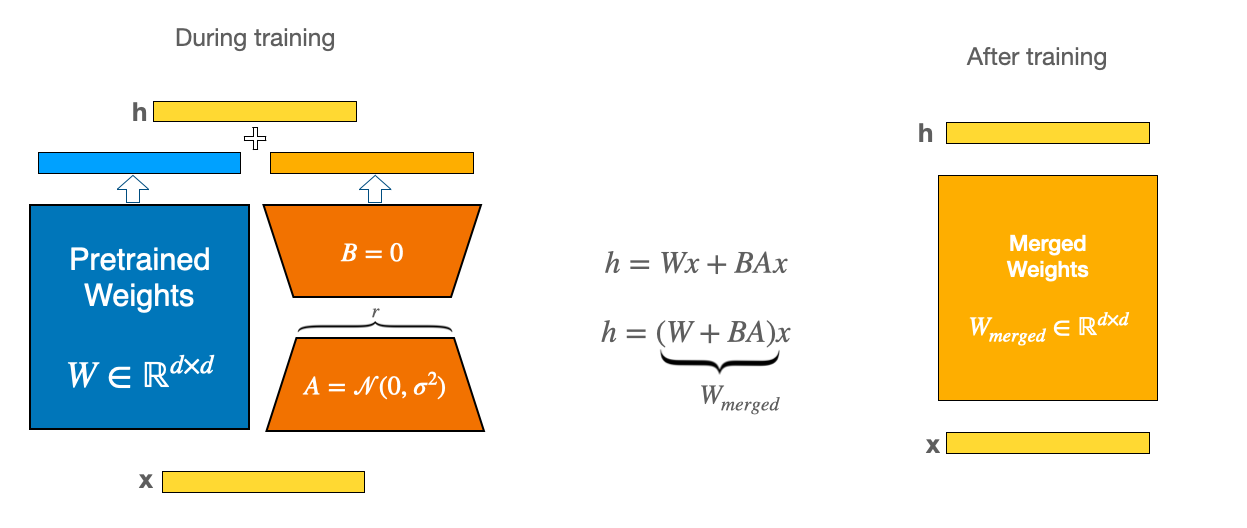

The pre-trained model is trained using LoRA method on custom dataset. The LoRA method is used to train the model with only trainable parameters.

The Vision Transformer (ViT) is a transformer encoder model (BERT-like) pretrained on a large collection of images in a supervised fashion, namely ImageNet-21k, at a resolution of 224x224 pixels. Next, the model was fine-tuned on ImageNet (also referred to as ILSVRC2012), a dataset comprising 1 million images and 1,000 classes, also at resolution 224x224.

Images are presented to the model as a sequence of fixed-size patches (resolution 16x16), which are linearly embedded. A [CLS] token is added to the beginning of the sequence for use in classification tasks, and absolute position embeddings are added before the sequence is fed to the layers of the Transformer encoder.
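
The patch arithmetic above can be checked with a short sketch. It assumes the ViT-Base/16 settings implied by the checkpoint name (224x224 RGB input, 16x16 patches). The helper name `vit_token_count` is illustrative and does not come from any library.

```python
def vit_token_count(image_size: int = 224, patch_size: int = 16, num_channels: int = 3):
    """Return (num_patches, sequence_length, values_per_patch) for a square ViT input."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size                 # 224 / 16 = 14
    num_patches = patches_per_side ** 2                         # 14 * 14 = 196 patches
    sequence_length = num_patches + 1                           # +1 for the [CLS] token
    values_per_patch = patch_size * patch_size * num_channels  # flattened 16x16 RGB patch
    return num_patches, sequence_length, values_per_patch


num_patches, sequence_length, values_per_patch = vit_token_count()
print(num_patches, sequence_length, values_per_patch)  # 196 197 768
```

Each flattened patch (768 values) is then linearly projected to the encoder's hidden size, which is also 768 for ViT-Base.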

Through pre-training, the model learns an inner representation of images that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled images, for instance, you can train a standard classifier by placing a linear layer on top of the pre-trained encoder. The linear layer is typically placed on top of the [CLS] token, because the last hidden state of this token can be seen as a representation of the entire image.
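
The following minimal NumPy sketch shows a linear head on the [CLS] token. Random arrays stand in for the encoder output and the head weights, so none of the values come from the real model. The shapes follow ViT-Base at 224x224 (197 tokens, hidden size 768) and the 37 classes of this card.

```python
import numpy as np

rng = np.random.default_rng(0)
batch_size, seq_len, hidden_size, num_classes = 2, 197, 768, 37

# Stand-in for the encoder's last hidden state: (batch, tokens, hidden)
last_hidden_state = rng.normal(size=(batch_size, seq_len, hidden_size))

# The [CLS] token sits at position 0 and summarizes the whole image
cls_embedding = last_hidden_state[:, 0, :]  # (batch, hidden)

# Linear classification head (randomly initialized here)
W = rng.normal(scale=0.02, size=(hidden_size, num_classes))
b = np.zeros(num_classes)

logits = cls_embedding @ W + b      # (batch, num_classes)
predicted = logits.argmax(axis=-1)  # one class index per image
print(logits.shape, predicted.shape)  # (2, 37) (2,)
```

In 🤗 Transformers, `ViTForImageClassification` applies its `classifier` linear layer to the [CLS] position of the final hidden state in the same way.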

## What is LoRA

LoRA (Low-Rank Adaptation) is a parameter-efficient fine-tuning method that uses low-rank decomposition to reduce the number of trainable parameters. Instead of updating the entire weight matrix, LoRA trains two smaller matrices whose product represents the weight update. The original weight matrix stays frozen.
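
A standard way to write this in symbols is shown below. Here $W_0$ is the frozen pre-trained weight, $B$ and $A$ are the trainable rank-$r$ factors, and $\alpha$ is the scaling factor that PEFT exposes as `lora_alpha`:

$$
h = W_0 x + \Delta W x = W_0 x + \frac{\alpha}{r} B A x,
\qquad W_0 \in \mathbb{R}^{d \times k},\quad B \in \mathbb{R}^{d \times r},\quad A \in \mathbb{R}^{r \times k},\quad r \ll \min(d, k)
$$

Only $A$ and $B$ are trained. $B$ is commonly initialized to zero, so $\Delta W = 0$ at the start of training and the adapted layer initially behaves exactly like the pre-trained one.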

To implement LoRA, we first freeze the pre-trained model weights. We then insert trainable rank decomposition matrices into selected layers of the transformer, typically the attention projections chosen via `target_modules`. These matrices are much smaller than the original weights but can capture the information needed for the adaptation. The product of the two rank decomposition matrices is a low-rank update, and adding it to the frozen weights gives the adapted weights. This can reduce the number of trainable parameters by several orders of magnitude, which also lowers GPU memory use during training. The update can be merged into the frozen weights after training, so LoRA adds no extra inference latency.
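
The steps above can be sketched for a single linear layer in NumPy. This is an illustrative toy, not PEFT's implementation. The dimensions (100 x 100 weight, rank 10) mirror the worked example in this section, the random values are placeholders, and `lora_forward` is a hypothetical helper. The sketch checks three properties:

* With B initialized to zero, the adapted layer starts out identical to the frozen one.
* The update has rank at most r.
* After training, the update can be merged into a single weight matrix.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, r, lora_alpha = 100, 100, 10, 10
scaling = lora_alpha / r

W0 = rng.normal(size=(d_out, d_in))         # frozen pre-trained weight
A = rng.normal(scale=0.01, size=(r, d_in))  # trainable down-projection (rank r)
B = np.zeros((d_out, r))                    # trainable up-projection, zero at init


def lora_forward(x, W0, A, B, scaling):
    """Frozen path plus scaled low-rank update: x W0^T + scaling * x A^T B^T."""
    return x @ W0.T + scaling * (x @ A.T @ B.T)


x = rng.normal(size=(4, d_in))  # a batch of 4 input vectors

# At initialization B = 0, so the adapted layer matches the frozen layer exactly
out_init = lora_forward(x, W0, A, B, scaling)

# Pretend training has updated B; the update B @ A has rank at most r
B_trained = rng.normal(size=(d_out, r))
delta_W = scaling * (B_trained @ A)
out_trained = lora_forward(x, W0, A, B_trained, scaling)

# Merge the update into one matrix: inference then costs the same as the original layer
W_merged = W0 + delta_W
out_merged = x @ W_merged.T

print(np.allclose(out_init, x @ W0.T))      # True
print(np.linalg.matrix_rank(delta_W) <= r)  # True
print(np.allclose(out_trained, out_merged)) # True
```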

For example, suppose we have a pre-trained model with 100 million parameters and 50 layers, and we adapt one 100 x 100 weight matrix in each layer. With LoRA at rank 10, we insert two rank decomposition matrices of size 100 x 10 and 10 x 100 into each layer. Their product is a rank-10 update to the frozen 100 x 100 matrix. It needs only 100 x 10 + 10 x 100 = 2,000 trainable parameters per layer instead of 10,000. Across all 50 layers, that is 100,000 trainable parameters, compared with 100 million for full fine-tuning: a 1,000-fold reduction. After training, the update can be merged into the frozen weights, so inference runs at the same speed as the original model.
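
The arithmetic in this example can be checked directly. The numbers below are the hypothetical ones from the example, not measurements of this model:

```python
total_params = 100_000_000  # full model size in the example
num_layers = 50
d, r = 100, 10              # one adapted 100 x 100 matrix per layer, LoRA rank 10

full_matrix_params = d * d                              # 10,000 weights in each adapted matrix
lora_params_per_layer = d * r + r * d                   # B (100 x 10) + A (10 x 100) = 2,000
trainable_params = lora_params_per_layer * num_layers   # 100,000
reduction = total_params // trainable_params            # 1,000-fold

print(f"{lora_params_per_layer:,} per layer vs {full_matrix_params:,}")
# 2,000 per layer vs 10,000
print(f"{trainable_params:,} trainable vs {total_params:,} total ({reduction:,}x fewer)")
# 100,000 trainable vs 100,000,000 total (1,000x fewer)
```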

This approach offers several benefits:

1. Reduced Trainable Parameters: LoRA significantly reduces the number of trainable parameters. This lowers memory consumption and typically speeds up training.
2. Frozen Pre-trained Weights: The original weight matrix remains frozen, so it can serve as a shared foundation for multiple lightweight LoRA adapters. This facilitates efficient transfer learning and domain adaptation.
3. Compatibility with Other Efficiency Techniques: LoRA can be combined with other techniques, such as knowledge distillation and pruning, to further improve model efficiency.
4. Comparable Performance: LoRA often achieves performance comparable to full fine-tuning despite training far fewer parameters.

### Let's examine the `LoraConfig` parameters

- `r`: The rank of the update matrices, given as an integer. Lower ranks produce smaller update matrices with fewer trainable parameters.
- `target_modules`: The modules (such as the attention projections) to which the LoRA update matrices are applied.
- `lora_alpha`: The scaling factor for LoRA. The update is scaled by `lora_alpha / r`.
- `layers_pattern`: The pattern used to match layer names in `target_modules` when `layers_to_transform` is specified. By default, PEFT uses a common layer pattern (`layers`, `h`, `blocks`, etc.); set it explicitly for exotic and custom models.
- `rank_pattern`: A mapping from layer names or regular expressions to ranks that differ from the default rank specified by `r`.
- `alpha_pattern`: A mapping from layer names or regular expressions to alphas that differ from the default alpha specified by `lora_alpha`.

### Let's look at the components of the LoRA model

- **lora.Linear**: The wrapper PEFT places around each linear layer selected by `target_modules`. In ViT, for example, these are the `query`, `key`, or `value` projections of the attention mechanism. The wrapper adds a low-rank update to the layer's output.
- **base_layer**: The original, frozen linear transformation.
- **lora_dropout**: Dropout applied to the input of the LoRA branch.
- **lora_A**: The matrix A in the low-rank decomposition, which projects the input down to rank `r`.
- **lora_B**: The matrix B in the low-rank decomposition, which projects back up to the output dimension.
- **lora_embedding_A/B**: Parameter containers for the A and B matrices when LoRA is applied to embedding layers. They are empty in `lora.Linear`.

## Intended uses & limitations

You can use this model to classify images into the 37 pet breeds listed in the `classes` list of the example below. To find ViT checkpoints fine-tuned on other tasks, search the [model hub](https://huggingface.co/models?search=google/vit).

### How to use

```python
from transformers import ViTImageProcessor, AutoModelForImageClassification
from peft import PeftConfig, PeftModel
from PIL import Image
import requests
import torch

# Download an example image from the pets dataset
url = "https://huggingface.co/datasets/alanahmet/LoRA-pets-dataset/resolve/main/shiba_inu_136.jpg"
image = Image.open(requests.get(url, stream=True).raw)

processor = ViTImageProcessor.from_pretrained("google/vit-base-patch16-224")
# Hub repo ID of the LoRA adapter (from_pretrained expects a repo ID, not a full URL)
repo_name = "md-vasim/vit-base-patch16-224-finetuned-lora-oxford-pets"

classes = ['chihuahua', 'newfoundland', 'english setter', 'Persian', 'yorkshire terrier', 'Maine Coon', 'boxer', 'leonberger', 'Birman', 'staffordshire bull terrier', 'Egyptian Mau', 'shiba inu', 'wheaten terrier', 'miniature pinscher', 'american pit bull terrier', 'Bombay', 'British Shorthair', 'german shorthaired', 'american bulldog', 'Abyssinian', 'great pyrenees', 'Siamese', 'Sphynx', 'english cocker spaniel', 'japanese chin', 'havanese', 'Russian Blue', 'saint bernard', 'samoyed', 'scottish terrier', 'keeshond', 'Bengal', 'Ragdoll', 'pomeranian', 'beagle', 'basset hound', 'pug']

label2id = {c: idx for idx, c in enumerate(classes)}
id2label = {idx: c for idx, c in enumerate(classes)}

# Load the base model named in the adapter config, with a new 37-class head
config = PeftConfig.from_pretrained(repo_name)
model = AutoModelForImageClassification.from_pretrained(
    config.base_model_name_or_path,
    label2id=label2id,
    id2label=id2label,
    ignore_mismatched_sizes=True,  # the base checkpoint has a 1,000-class ImageNet head; replace it
)
# Load the LoRA adapter weights on top of the base model
inference_model = PeftModel.from_pretrained(model, repo_name)

encoding = processor(image.convert("RGB"), return_tensors="pt")
with torch.no_grad():
    outputs = inference_model(**encoding)
    logits = outputs.logits

predicted_class_idx = logits.argmax(-1).item()
print("Predicted class:", inference_model.config.id2label[predicted_class_idx])
```

## Training Arguments

```python
from transformers import TrainingArguments

model_checkpoint = "google/vit-base-patch16-224"  # base checkpoint this adapter was fine-tuned from
batch_size = 128

args = TrainingArguments(
    f"{model_checkpoint}-finetuned-lora-oxford-pets",  # output directory
    per_device_train_batch_size=batch_size,
    learning_rate=5e-3,
    num_train_epochs=5,
    per_device_eval_batch_size=batch_size,
    gradient_accumulation_steps=4,  # effective train batch size: 128 x 4 = 512 per device
    logging_steps=10,
    save_total_limit=2,
    eval_strategy="epoch",  # named `evaluation_strategy` in older transformers releases
    save_strategy="epoch",
    metric_for_best_model="accuracy",  # requires a compute_metrics function that returns "accuracy"
    report_to="tensorboard",
    fp16=True,  # mixed precision; requires a CUDA GPU
    push_to_hub=True,  # requires being logged in to the Hugging Face Hub
    remove_unused_columns=False,  # keep columns (e.g. images) not in the model's forward signature
    load_best_model_at_end=True,
)
```
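
The `metric_for_best_model="accuracy"` and `load_best_model_at_end=True` settings require the evaluation to report an `accuracy` value, so the `Trainer` needs a `compute_metrics` function. The card does not include the function used for training. A minimal NumPy version might look like the sketch below; the function body and the example arrays are assumptions.

```python
import numpy as np


def compute_metrics(eval_pred):
    """Return top-1 accuracy for the Trainer; eval_pred unpacks to (logits, labels)."""
    logits, labels = eval_pred
    predictions = np.argmax(logits, axis=-1)
    return {"accuracy": float((predictions == labels).mean())}


# Example with 3 samples and 4 classes: the first two predictions are correct
example_logits = np.array([[0.1, 2.0, 0.3, 0.0],
                           [1.5, 0.2, 0.1, 0.0],
                           [0.0, 0.1, 0.2, 3.0]])
example_labels = np.array([1, 0, 2])
print(compute_metrics((example_logits, example_labels)))  # {'accuracy': 0.6666666666666666}
```

To use it, pass it as `Trainer(..., compute_metrics=compute_metrics)`. The `Trainer` logs the result as `eval_accuracy`, which is the metric that `metric_for_best_model="accuracy"` refers to.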

For more code examples, see the [ViT documentation](https://huggingface.co/docs/transformers/model_doc/vit).
