Update README.md

README.md

|

@@ -2,9 +2,56 @@

|

|

| 2 |

license: apache-2.0

|

| 3 |

datasets:

|

| 4 |

- Yirany/UniMM-Chat

|

|

|

|

| 5 |

language:

|

| 6 |

- en

|

| 7 |

library_name: transformers

|

| 8 |

---

|

| 9 |

|

| 10 |

-


| 2 |

license: apache-2.0

|

| 3 |

datasets:

|

| 4 |

- Yirany/UniMM-Chat

|

| 5 |

+

- HaoyeZhang/RLHF-V-Dataset

|

| 6 |

language:

|

| 7 |

- en

|

| 8 |

library_name: transformers

|

| 9 |

---

|

| 10 |

|

| 11 |

+

# Model Card for RLHF-V

|

| 12 |

+

|

| 13 |

+

[Project Page](https://rlhf-v.github.io/) | [GitHub](https://github.com/RLHF-V/RLHF-V) | [Demo](http://120.92.209.146:8081/) | [Paper](https://arxiv.org/abs/2312.00849)

|

| 14 |

+

|

| 15 |

+

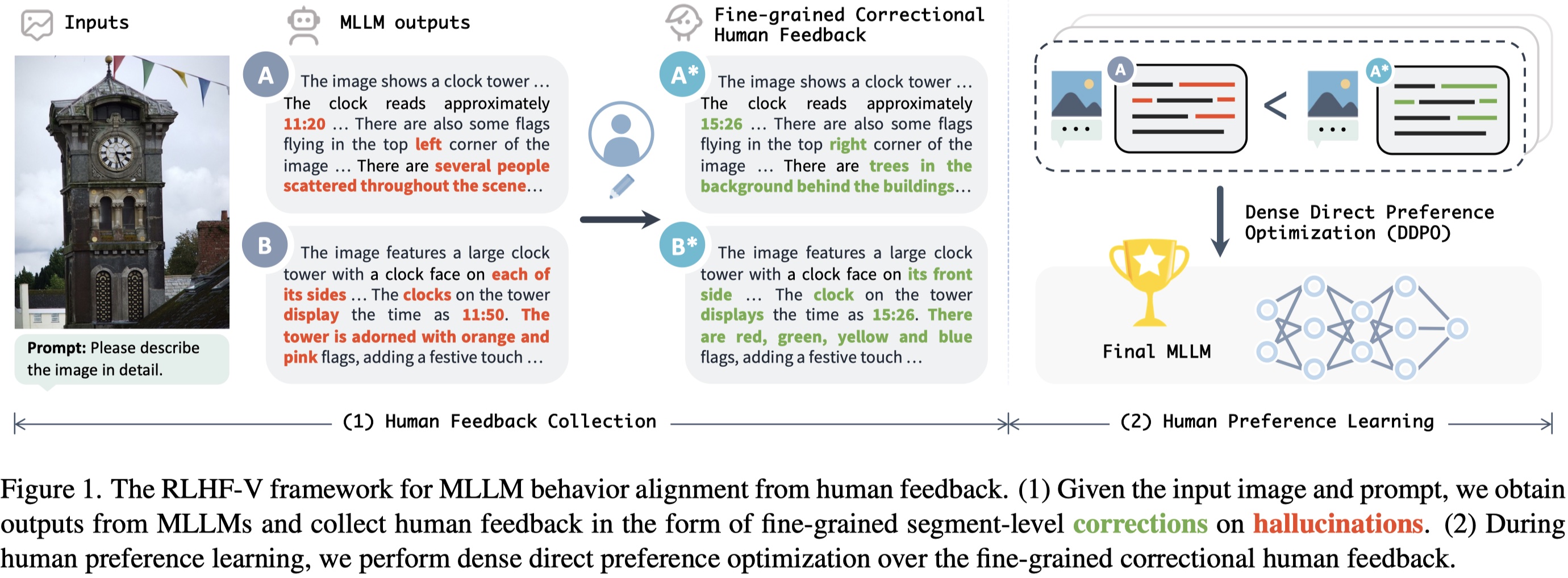

RLHF-V is an open-source multimodal large language model with the **lowest hallucination rate** on both long-form instructions and short-form questions.

|

| 16 |

+

|

| 17 |

+

RLHF-V is trained on [RLHF-V-Dataset](https://huggingface.co/datasets/HaoyeZhang/RLHF-V-Dataset), which contains **fine-grained segment-level human corrections** on diverse instructions. The base model is trained on [UniMM-Chat](https://huggingface.co/datasets/Yirany/UniMM-Chat), a high-quality, knowledge-intensive SFT dataset. We introduce a new method, **Dense Direct Preference Optimization (DDPO)**, that makes better use of the fine-grained annotations.
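
To give a rough feel for how segment-level corrections could enter a preference objective, here is a minimal sketch. It is not the released training code. It assumes one plausible reading of DDPO: tokens inside human-corrected segments are up-weighted by a factor `gamma` when scoring a response, and the scores are then fed into a standard DPO-style loss. The function names, the `gamma` and `beta` defaults, and the exact normalization are illustrative assumptions; the paper is the authoritative description.

```python
import math


def weighted_sequence_logprob(token_logprobs, corrected_mask, gamma=2.0):
    """Weighted average of per-token log-probabilities.

    Tokens flagged in corrected_mask (hypothetically, tokens inside a
    human-corrected segment) count gamma times; other tokens count once.
    gamma=2.0 is an illustrative value, not taken from the source.
    """
    if len(token_logprobs) != len(corrected_mask):
        raise ValueError("token_logprobs and corrected_mask must have equal length")
    if not token_logprobs:
        raise ValueError("response must contain at least one token")
    weights = [gamma if corrected else 1.0 for corrected in corrected_mask]
    weighted_sum = sum(w * lp for w, lp in zip(weights, token_logprobs))
    return weighted_sum / sum(weights)


def preference_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.5):
    """DPO-style loss: -log(sigmoid(beta * margin)).

    Each argument is a sequence score (for example, the output of
    weighted_sequence_logprob) under the policy or the frozen reference model.
    beta=0.5 is an illustrative value, not taken from the source.
    """
    margin = beta * ((policy_chosen - ref_chosen) - (policy_rejected - ref_rejected))
    # -log(sigmoid(m)) equals softplus(-m); this form avoids overflow for large |m|.
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))
```

With `gamma=1.0` the scoring function reduces to a plain length-normalized average, so the corrected segments only gain extra influence when `gamma` exceeds 1.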

|

| 18 |

+

|

| 19 |

+

For more details, please refer to our [paper](https://arxiv.org/abs/2312.00849).

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

## Model Details

|

| 24 |

+

|

| 25 |

+

### Model Description

|

| 26 |

+

- **Trained from model:** Vicuna-13B

|

| 27 |

+

- **Trained on data:** [RLHF-V-Dataset](https://huggingface.co/datasets/HaoyeZhang/RLHF-V-Dataset)

|

| 28 |

+

|

| 29 |

+

### Model Sources

|

| 30 |

+

|

| 31 |

+

- **Project Page:** https://rlhf-v.github.io

|

| 32 |

+

- **GitHub Repository:** https://github.com/RLHF-V/RLHF-V

|

| 33 |

+

- **Demo:** http://120.92.209.146:8081

|

| 34 |

+

- **Paper:** https://arxiv.org/abs/2312.00849

|

| 35 |

+

|

| 36 |

+

## Performance

|

| 37 |

+

|

| 38 |

+

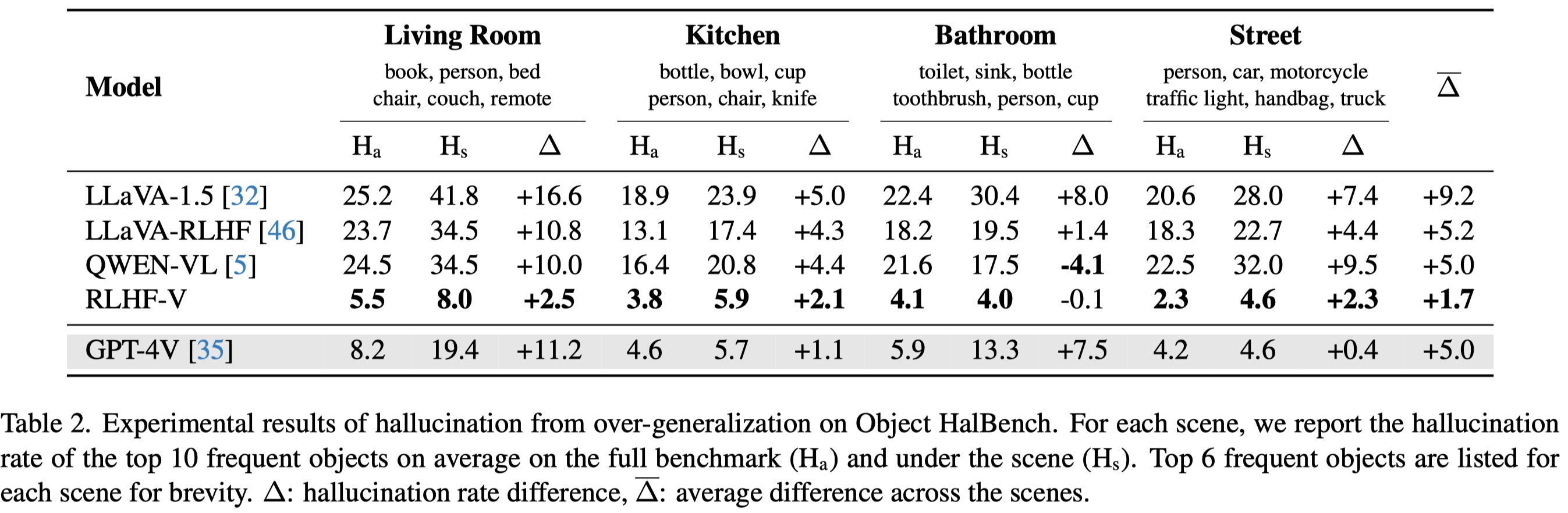

Low hallucination rate while being informative:

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

More resistant to over-generalization, even compared to GPT-4V:

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

|

| 46 |

+

## Citation

|

| 47 |

+

|

| 48 |

+

If you find RLHF-V useful in your work, please cite it with:

|

| 49 |

+

|

| 50 |

+

```

|

| 51 |

+

@article{2023rlhf-v,

|

| 52 |

+

author = {Tianyu Yu and Yuan Yao and Haoye Zhang and Taiwen He and Yifeng Han and Ganqu Cui and Jinyi Hu and Zhiyuan Liu and Hai-Tao Zheng and Maosong Sun and Tat-Seng Chua},

|

| 53 |

+

title = {RLHF-V: Towards Trustworthy MLLMs via Behavior Alignment from Fine-grained Correctional Human Feedback},

|

| 54 |

+

journal = {arXiv preprint arXiv:2312.00849},

|

| 55 |

+

year = {2023},

|

| 56 |

+

}

|

| 57 |

+

```

|