This repo contains a low-rank adapter for LLaMA-13b fit on the Stanford Alpaca dataset.

This version of the weights was trained on a dual RTX 3090 system powered by solar energy. Total training time was about 24 hours.

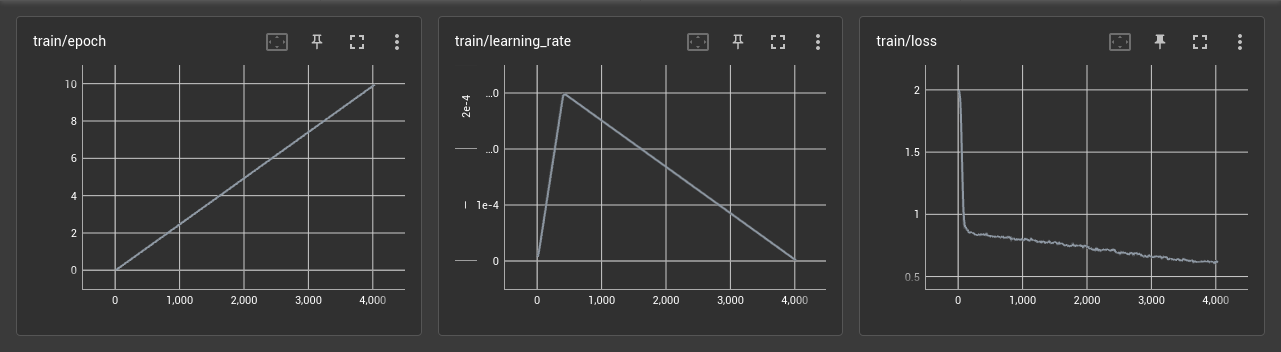

The following hyperparameters were used:

Epochs: 10

Batch size: 128

Cutoff length: 256

Learning rate: 3e-4

Lora r: 16

Lora alpha: 16

Lora target modules: q_proj, k_proj, v_proj, o_proj
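As a rough sanity check on what these LoRA settings imply, the sketch below computes the adapter scaling factor (alpha / r) and the number of trainable adapter parameters. The model dimensions (hidden size 5120, 40 decoder layers, square attention projections) are assumptions taken from the published LLaMA-13B configuration, not from this repo, and the helper names are made up for illustration.

```python
# LoRA hyperparameters listed above.
LORA_R = 16
LORA_ALPHA = 16
TARGET_MODULES = ["q_proj", "k_proj", "v_proj", "o_proj"]

# ASSUMPTION: LLaMA-13B dimensions (not stated in this README).
HIDDEN_SIZE = 5120
NUM_LAYERS = 40


def lora_scaling(alpha: int, r: int) -> float:
    """Factor applied to the low-rank update B @ A in standard LoRA."""
    return alpha / r


def lora_param_count(r: int, d_in: int, d_out: int,
                     n_modules: int, n_layers: int) -> int:
    """Each adapted weight gets A (r x d_in) and B (d_out x r)."""
    return n_layers * n_modules * r * (d_in + d_out)


scaling = lora_scaling(LORA_ALPHA, LORA_R)
trainable = lora_param_count(
    LORA_R, HIDDEN_SIZE, HIDDEN_SIZE, len(TARGET_MODULES), NUM_LAYERS
)

print(f"scaling = {scaling}")
print(f"trainable adapter parameters = {trainable:,}")
```

Under these assumptions the adapter adds roughly 26 million trainable parameters, a small fraction of the 13B base model.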

That is:

OMP_NUM_THREADS=4 WORLD_SIZE=2 CUDA_VISIBLE_DEVICES=0,1 torchrun --nproc_per_node=2 --master_port=1234 finetune.py \

--base_model='decapoda-research/llama-13b-hf' \

--data_path='yahma/alpaca-cleaned' \

--num_epochs=10 \

--output_dir='./lora-alpaca-13b-256-qkvo' \

--lora_target_modules='[q_proj,k_proj,v_proj,o_proj]' \

--lora_r=16 \

--val_set_size=0 \

--micro_batch_size=32
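The command does not pass a batch size, so the listed batch size of 128 is reached through gradient accumulation. The sketch below shows the arithmetic, assuming finetune.py derives the accumulation steps as batch_size // micro_batch_size and divides by the world size when running on more than one GPU; the function name is hypothetical.

```python
def accumulation_steps(batch_size: int, micro_batch_size: int,
                       world_size: int) -> int:
    """Gradient accumulation steps per process.

    ASSUMPTION: mirrors the batch_size // micro_batch_size rule,
    further divided by world_size under distributed training.
    """
    steps = batch_size // micro_batch_size
    if world_size > 1:
        steps //= world_size
    return steps


BATCH_SIZE = 128        # listed hyperparameter
MICRO_BATCH_SIZE = 32   # --micro_batch_size=32
WORLD_SIZE = 2          # WORLD_SIZE=2, --nproc_per_node=2

steps = accumulation_steps(BATCH_SIZE, MICRO_BATCH_SIZE, WORLD_SIZE)
effective = MICRO_BATCH_SIZE * WORLD_SIZE * steps

print(f"accumulation steps per GPU = {steps}")
print(f"effective batch size = {effective}")
```

With two GPUs each processing micro-batches of 32, two accumulation steps per optimizer update give the effective batch size of 128.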

LR warmup was tuned to fit the first epoch.
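One way to read this is that the number of warmup steps was set to roughly the number of optimizer steps in one epoch. The sketch below illustrates that reading only; the dataset size is a hypothetical placeholder, the linear warmup shape is an assumption, and the schedule after warmup is simply held constant here because it is not described.

```python
import math


def steps_per_epoch(num_examples: int, batch_size: int) -> int:
    """Optimizer steps needed to see every example once."""
    return math.ceil(num_examples / batch_size)


def warmup_lr(step: int, warmup_steps: int, peak_lr: float) -> float:
    """Linear warmup from 0 to peak_lr, then held constant (ASSUMPTION)."""
    return peak_lr * min(1.0, step / warmup_steps)


NUM_EXAMPLES = 50_000   # HYPOTHETICAL dataset size, for illustration only
BATCH_SIZE = 128        # listed hyperparameter
PEAK_LR = 3e-4          # listed hyperparameter

warmup_steps = steps_per_epoch(NUM_EXAMPLES, BATCH_SIZE)

for step in (0, warmup_steps // 2, warmup_steps):
    print(step, warmup_lr(step, warmup_steps, PEAK_LR))
```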

Instructions for running it can be found at https://github.com/tloen/alpaca-lora.