modelId

string | author

string | last_modified

timestamp[us, tz=UTC] | downloads

int64 | likes

int64 | library_name

string | tags

list | pipeline_tag

string | createdAt

timestamp[us, tz=UTC] | card

string |

|---|---|---|---|---|---|---|---|---|---|

stabilityai/stable-diffusion-2-1-base

|

stabilityai

| 2023-07-05T16:19:20Z | 863,939 | 647 |

diffusers

|

[

"diffusers",

"safetensors",

"stable-diffusion",

"text-to-image",

"arxiv:2112.10752",

"arxiv:2202.00512",

"arxiv:1910.09700",

"license:openrail++",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2022-12-06T17:25:36Z |

---

license: openrail++

tags:

- stable-diffusion

- text-to-image

---

# Stable Diffusion v2-1-base Model Card

This model card focuses on the model associated with the Stable Diffusion v2-1-base model.

This `stable-diffusion-2-1-base` model fine-tunes [stable-diffusion-2-base](https://huggingface.co/stabilityai/stable-diffusion-2-base) (`512-base-ema.ckpt`) with 220k extra steps taken, with `punsafe=0.98` on the same dataset.

- Use it with the [`stablediffusion`](https://github.com/Stability-AI/stablediffusion) repository: download the `v2-1_512-ema-pruned.ckpt` [here](https://huggingface.co/stabilityai/stable-diffusion-2-1-base/resolve/main/v2-1_512-ema-pruned.ckpt).

- Use it with 🧨 [`diffusers`](#examples)

## Model Details

- **Developed by:** Robin Rombach, Patrick Esser

- **Model type:** Diffusion-based text-to-image generation model

- **Language(s):** English

- **License:** [CreativeML Open RAIL++-M License](https://huggingface.co/stabilityai/stable-diffusion-2/blob/main/LICENSE-MODEL)

- **Model Description:** This is a model that can be used to generate and modify images based on text prompts. It is a [Latent Diffusion Model](https://arxiv.org/abs/2112.10752) that uses a fixed, pretrained text encoder ([OpenCLIP-ViT/H](https://github.com/mlfoundations/open_clip)).

- **Resources for more information:** [GitHub Repository](https://github.com/Stability-AI/).

- **Cite as:**

@InProceedings{Rombach_2022_CVPR,

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2022},

pages = {10684-10695}

}

## Examples

Using the [🤗's Diffusers library](https://github.com/huggingface/diffusers) to run Stable Diffusion 2 in a simple and efficient manner.

```bash

pip install diffusers transformers accelerate scipy safetensors

```

Running the pipeline (if you don't swap the scheduler it will run with the default PNDM/PLMS scheduler, in this example we are swapping it to EulerDiscreteScheduler):

```python

from diffusers import StableDiffusionPipeline, EulerDiscreteScheduler

import torch

model_id = "stabilityai/stable-diffusion-2-1-base"

scheduler = EulerDiscreteScheduler.from_pretrained(model_id, subfolder="scheduler")

pipe = StableDiffusionPipeline.from_pretrained(model_id, scheduler=scheduler, torch_dtype=torch.float16)

pipe = pipe.to("cuda")

prompt = "a photo of an astronaut riding a horse on mars"

image = pipe(prompt).images[0]

image.save("astronaut_rides_horse.png")

```

**Notes**:

- Despite not being a dependency, we highly recommend you to install [xformers](https://github.com/facebookresearch/xformers) for memory efficient attention (better performance)

- If you have low GPU RAM available, make sure to add a `pipe.enable_attention_slicing()` after sending it to `cuda` for less VRAM usage (to the cost of speed)

# Uses

## Direct Use

The model is intended for research purposes only. Possible research areas and tasks include

- Safe deployment of models which have the potential to generate harmful content.

- Probing and understanding the limitations and biases of generative models.

- Generation of artworks and use in design and other artistic processes.

- Applications in educational or creative tools.

- Research on generative models.

Excluded uses are described below.

### Misuse, Malicious Use, and Out-of-Scope Use

_Note: This section is originally taken from the [DALLE-MINI model card](https://huggingface.co/dalle-mini/dalle-mini), was used for Stable Diffusion v1, but applies in the same way to Stable Diffusion v2_.

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes.

#### Out-of-Scope Use

The model was not trained to be factual or true representations of people or events, and therefore using the model to generate such content is out-of-scope for the abilities of this model.

#### Misuse and Malicious Use

Using the model to generate content that is cruel to individuals is a misuse of this model. This includes, but is not limited to:

- Generating demeaning, dehumanizing, or otherwise harmful representations of people or their environments, cultures, religions, etc.

- Intentionally promoting or propagating discriminatory content or harmful stereotypes.

- Impersonating individuals without their consent.

- Sexual content without consent of the people who might see it.

- Mis- and disinformation

- Representations of egregious violence and gore

- Sharing of copyrighted or licensed material in violation of its terms of use.

- Sharing content that is an alteration of copyrighted or licensed material in violation of its terms of use.

## Limitations and Bias

### Limitations

- The model does not achieve perfect photorealism

- The model cannot render legible text

- The model does not perform well on more difficult tasks which involve compositionality, such as rendering an image corresponding to “A red cube on top of a blue sphere”

- Faces and people in general may not be generated properly.

- The model was trained mainly with English captions and will not work as well in other languages.

- The autoencoding part of the model is lossy

- The model was trained on a subset of the large-scale dataset

[LAION-5B](https://laion.ai/blog/laion-5b/), which contains adult, violent and sexual content. To partially mitigate this, we have filtered the dataset using LAION's NFSW detector (see Training section).

### Bias

While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases.

Stable Diffusion vw was primarily trained on subsets of [LAION-2B(en)](https://laion.ai/blog/laion-5b/),

which consists of images that are limited to English descriptions.

Texts and images from communities and cultures that use other languages are likely to be insufficiently accounted for.

This affects the overall output of the model, as white and western cultures are often set as the default. Further, the

ability of the model to generate content with non-English prompts is significantly worse than with English-language prompts.

Stable Diffusion v2 mirrors and exacerbates biases to such a degree that viewer discretion must be advised irrespective of the input or its intent.

## Training

**Training Data**

The model developers used the following dataset for training the model:

- LAION-5B and subsets (details below). The training data is further filtered using LAION's NSFW detector, with a "p_unsafe" score of 0.1 (conservative). For more details, please refer to LAION-5B's [NeurIPS 2022](https://openreview.net/forum?id=M3Y74vmsMcY) paper and reviewer discussions on the topic.

**Training Procedure**

Stable Diffusion v2 is a latent diffusion model which combines an autoencoder with a diffusion model that is trained in the latent space of the autoencoder. During training,

- Images are encoded through an encoder, which turns images into latent representations. The autoencoder uses a relative downsampling factor of 8 and maps images of shape H x W x 3 to latents of shape H/f x W/f x 4

- Text prompts are encoded through the OpenCLIP-ViT/H text-encoder.

- The output of the text encoder is fed into the UNet backbone of the latent diffusion model via cross-attention.

- The loss is a reconstruction objective between the noise that was added to the latent and the prediction made by the UNet. We also use the so-called _v-objective_, see https://arxiv.org/abs/2202.00512.

We currently provide the following checkpoints, for various versions:

### Version 2.1

- `512-base-ema.ckpt`: Fine-tuned on `512-base-ema.ckpt` 2.0 with 220k extra steps taken, with `punsafe=0.98` on the same dataset.

- `768-v-ema.ckpt`: Resumed from `768-v-ema.ckpt` 2.0 with an additional 55k steps on the same dataset (`punsafe=0.1`), and then fine-tuned for another 155k extra steps with `punsafe=0.98`.

### Version 2.0

- `512-base-ema.ckpt`: 550k steps at resolution `256x256` on a subset of [LAION-5B](https://laion.ai/blog/laion-5b/) filtered for explicit pornographic material, using the [LAION-NSFW classifier](https://github.com/LAION-AI/CLIP-based-NSFW-Detector) with `punsafe=0.1` and an [aesthetic score](https://github.com/christophschuhmann/improved-aesthetic-predictor) >= `4.5`.

850k steps at resolution `512x512` on the same dataset with resolution `>= 512x512`.

- `768-v-ema.ckpt`: Resumed from `512-base-ema.ckpt` and trained for 150k steps using a [v-objective](https://arxiv.org/abs/2202.00512) on the same dataset. Resumed for another 140k steps on a `768x768` subset of our dataset.

- `512-depth-ema.ckpt`: Resumed from `512-base-ema.ckpt` and finetuned for 200k steps. Added an extra input channel to process the (relative) depth prediction produced by [MiDaS](https://github.com/isl-org/MiDaS) (`dpt_hybrid`) which is used as an additional conditioning.

The additional input channels of the U-Net which process this extra information were zero-initialized.

- `512-inpainting-ema.ckpt`: Resumed from `512-base-ema.ckpt` and trained for another 200k steps. Follows the mask-generation strategy presented in [LAMA](https://github.com/saic-mdal/lama) which, in combination with the latent VAE representations of the masked image, are used as an additional conditioning.

The additional input channels of the U-Net which process this extra information were zero-initialized. The same strategy was used to train the [1.5-inpainting checkpoint](https://github.com/saic-mdal/lama).

- `x4-upscaling-ema.ckpt`: Trained for 1.25M steps on a 10M subset of LAION containing images `>2048x2048`. The model was trained on crops of size `512x512` and is a text-guided [latent upscaling diffusion model](https://arxiv.org/abs/2112.10752).

In addition to the textual input, it receives a `noise_level` as an input parameter, which can be used to add noise to the low-resolution input according to a [predefined diffusion schedule](configs/stable-diffusion/x4-upscaling.yaml).

- **Hardware:** 32 x 8 x A100 GPUs

- **Optimizer:** AdamW

- **Gradient Accumulations**: 1

- **Batch:** 32 x 8 x 2 x 4 = 2048

- **Learning rate:** warmup to 0.0001 for 10,000 steps and then kept constant

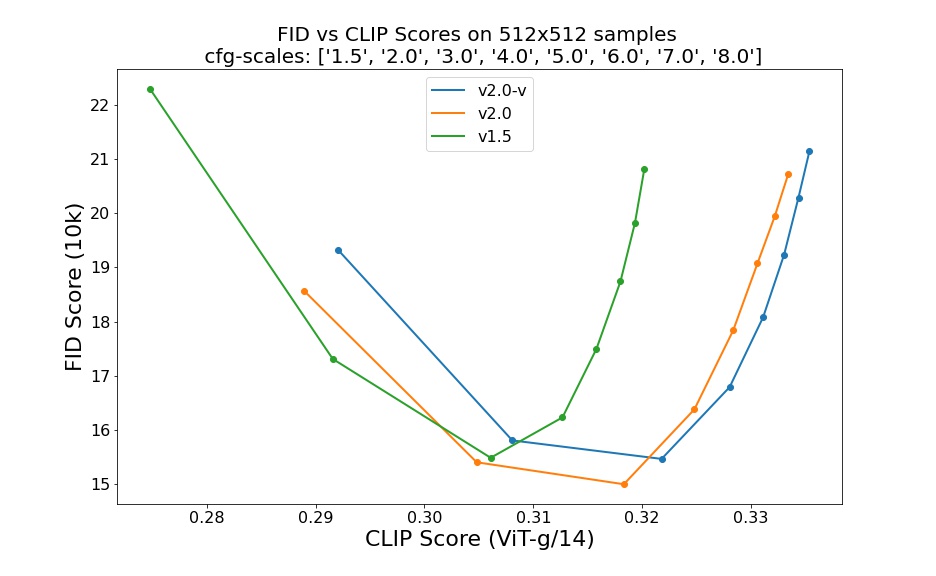

## Evaluation Results

Evaluations with different classifier-free guidance scales (1.5, 2.0, 3.0, 4.0,

5.0, 6.0, 7.0, 8.0) and 50 steps DDIM sampling steps show the relative improvements of the checkpoints:

Evaluated using 50 DDIM steps and 10000 random prompts from the COCO2017 validation set, evaluated at 512x512 resolution. Not optimized for FID scores.

## Environmental Impact

**Stable Diffusion v1** **Estimated Emissions**

Based on that information, we estimate the following CO2 emissions using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700). The hardware, runtime, cloud provider, and compute region were utilized to estimate the carbon impact.

- **Hardware Type:** A100 PCIe 40GB

- **Hours used:** 200000

- **Cloud Provider:** AWS

- **Compute Region:** US-east

- **Carbon Emitted (Power consumption x Time x Carbon produced based on location of power grid):** 15000 kg CO2 eq.

## Citation

@InProceedings{Rombach_2022_CVPR,

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2022},

pages = {10684-10695}

}

*This model card was written by: Robin Rombach, Patrick Esser and David Ha and is based on the [Stable Diffusion v1](https://github.com/CompVis/stable-diffusion/blob/main/Stable_Diffusion_v1_Model_Card.md) and [DALL-E Mini model card](https://huggingface.co/dalle-mini/dalle-mini).*

|

dkatsiros/ppo-LunarLander-v2

|

dkatsiros

| 2023-07-05T16:16:17Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-05T16:15:56Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: 258.74 +/- 16.94

name: mean_reward

verified: false

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

CeroShrijver/chinese-macbert-base-text-classification

|

CeroShrijver

| 2023-07-05T16:12:51Z | 117 | 0 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"bert",

"text-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-06-03T07:53:30Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: chinese-macbert-base-text-classification

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# chinese-macbert-base-text-classification

This model is a fine-tuned version of [hfl/chinese-macbert-base](https://huggingface.co/hfl/chinese-macbert-base) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6609

- Accuracy: 0.7844

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.5794 | 1.0 | 1009 | 0.4825 | 0.7900 |

| 0.3729 | 2.0 | 2018 | 0.5239 | 0.8043 |

| 0.3049 | 3.0 | 3027 | 0.6609 | 0.7844 |

### Framework versions

- Transformers 4.29.2

- Pytorch 2.0.1+cu117

- Datasets 2.12.0

- Tokenizers 0.13.3

|

Neko-Institute-of-Science/LLaMA-65B-4bit-32g

|

Neko-Institute-of-Science

| 2023-07-05T15:41:35Z | 5 | 10 |

transformers

|

[

"transformers",

"llama",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-04-07T04:33:21Z |

I tried making groupsize 16 but that did not end well so I went with 32g. FYI I can run this with full context on my A6000.

```

65B (act-order true-sequential groupsize)

wikitext2 3.5319948196411133 (stock 16bit)

wikitext2 3.610668182373047 (32g)

wikitext2 3.650667667388916 (16g)

wikitext2 3.6660284996032715 (128)

ptb-new 7.66942024230957 (stock 16bit)

ptb-new 7.71506929397583 (32g)

ptb-new 7.762592792510986 (128)

ptb-new 7.829207897186279 (16g)

c4-new 5.8114824295043945 (stock 16bit)

c4-new 5.859227657318115 (32g)

c4-new 5.893154144287109 (128)

c4-new 5.929086208343506 (16g)

```

|

robsong3/distilbert-base-uncased-finetuned-cola

|

robsong3

| 2023-07-05T14:55:09Z | 61 | 0 |

transformers

|

[

"transformers",

"tf",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-05T13:16:55Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: robsong3/distilbert-base-uncased-finetuned-cola

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# robsong3/distilbert-base-uncased-finetuned-cola

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.1931

- Validation Loss: 0.5174

- Train Matthews Correlation: 0.5396

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': None, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 1602, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Train Matthews Correlation | Epoch |

|:----------:|:---------------:|:--------------------------:|:-----:|

| 0.5157 | 0.4443 | 0.5062 | 0 |

| 0.3214 | 0.4521 | 0.5370 | 1 |

| 0.1931 | 0.5174 | 0.5396 | 2 |

### Framework versions

- Transformers 4.30.2

- TensorFlow 2.12.0

- Datasets 2.13.1

- Tokenizers 0.13.3

|

sjdata/ast-finetuned-audioset-10-10-0.4593-finetuned-gtzan

|

sjdata

| 2023-07-05T14:31:24Z | 163 | 0 |

transformers

|

[

"transformers",

"pytorch",

"audio-spectrogram-transformer",

"audio-classification",

"generated_from_trainer",

"dataset:marsyas/gtzan",

"license:bsd-3-clause",

"endpoints_compatible",

"region:us"

] |

audio-classification

| 2023-07-05T14:00:35Z |

---

license: bsd-3-clause

tags:

- generated_from_trainer

datasets:

- marsyas/gtzan

metrics:

- accuracy

model-index:

- name: ast-finetuned-audioset-10-10-0.4593-finetuned-gtzan

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# ast-finetuned-audioset-10-10-0.4593-finetuned-gtzan

This model is a fine-tuned version of [MIT/ast-finetuned-audioset-10-10-0.4593](https://huggingface.co/MIT/ast-finetuned-audioset-10-10-0.4593) on the GTZAN dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6230

- Accuracy: 0.89

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 2.1198 | 1.0 | 450 | 1.8429 | 0.47 |

| 0.0005 | 2.0 | 900 | 1.6282 | 0.71 |

| 0.3129 | 3.0 | 1350 | 1.0553 | 0.73 |

| 0.0225 | 4.0 | 1800 | 0.9422 | 0.82 |

| 0.0025 | 5.0 | 2250 | 0.6008 | 0.85 |

| 0.0 | 6.0 | 2700 | 0.7194 | 0.86 |

| 0.0 | 7.0 | 3150 | 0.6268 | 0.89 |

| 0.0 | 8.0 | 3600 | 0.6372 | 0.89 |

| 0.0 | 9.0 | 4050 | 0.6167 | 0.89 |

| 0.0 | 10.0 | 4500 | 0.6230 | 0.89 |

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

jliu596/q-FrozenLake-v1-4x4-noSlippery

|

jliu596

| 2023-07-05T14:20:19Z | 0 | 0 | null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-05T14:20:17Z |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing1 **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1** .

## Usage

```python

model = load_from_hub(repo_id="jliu596/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

```

|

huangyuyang/chatglm2-6b-fp16.flm

|

huangyuyang

| 2023-07-05T12:51:41Z | 0 | 9 | null |

[

"license:apache-2.0",

"region:us"

] | null | 2023-07-05T12:11:27Z |

---

license: apache-2.0

---

fastllm model for chatglm-6b-fp16

Github address: https://github.com/ztxz16/fastllm

|

Babiputih/Sarahvil

|

Babiputih

| 2023-07-05T12:21:01Z | 0 | 0 | null |

[

"arxiv:1910.09700",

"license:creativeml-openrail-m",

"region:us"

] | null | 2023-07-05T12:10:53Z |

---

license: creativeml-openrail-m

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

This modelcard aims to be a base template for new models. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/modelcard_template.md?plain=1).

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Data Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Data Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

luispintoc/taxi-v2

|

luispintoc

| 2023-07-05T12:03:57Z | 0 | 0 | null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-05T12:03:55Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: taxi-v2

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

metrics:

- type: mean_reward

value: 7.56 +/- 2.71

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing1 **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3** .

## Usage

```python

model = load_from_hub(repo_id="luispintoc/taxi-v2", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

```

|

riccorl/blink-bert-large

|

riccorl

| 2023-07-05T11:57:22Z | 110 | 2 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"bert",

"feature-extraction",

"en",

"license:mit",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2023-04-26T08:56:04Z |

---

license: mit

language:

- en

library_name: transformers

---

# BLINK BERT-Large Model

This is a BERT-Large model finetuned on [BLINK](https://github.com/facebookresearch/BLINK)

|

bofenghuang/vigogne-falcon-7b-instruct

|

bofenghuang

| 2023-07-05T11:38:33Z | 25 | 1 |

transformers

|

[

"transformers",

"pytorch",

"RefinedWebModel",

"text-generation",

"LLM",

"custom_code",

"fr",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] |

text-generation

| 2023-07-05T09:51:56Z |

---

license: apache-2.0

language:

- fr

pipeline_tag: text-generation

library_name: transformers

tags:

- LLM

inference: false

---

<p align="center" width="100%">

<img src="https://huggingface.co/bofenghuang/vigogne-falcon-7b-instruct/resolve/main/vigogne_logo.png" alt="Vigogne" style="width: 40%; min-width: 300px; display: block; margin: auto;">

</p>

# Vigogne-Falcon-7B-Instruct: A French Instruction-following Falcon Model

Vigogne-Falcon-7B-Instruct is a [Falcon-7B](https://huggingface.co/tiiuae/falcon-7b) model fine-tuned to follow the French instructions.

For more information, please visit the Github repo: https://github.com/bofenghuang/vigogne

## Usage

```python

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer, GenerationConfig

from vigogne.preprocess import generate_instruct_prompt

model_name_or_path = "bofenghuang/vigogne-falcon-7b-instruct"

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, padding_side="right", use_fast=False)

tokenizer.pad_token = tokenizer.eos_token

model = AutoModelForCausalLM.from_pretrained(

model_name_or_path,

torch_dtype=torch.float16,

device_map="auto",

trust_remote_code=True,

)

user_query = "Expliquez la différence entre DoS et phishing."

prompt = generate_instruct_prompt(user_query)

input_ids = tokenizer(prompt, return_tensors="pt")["input_ids"].to(model.device)

input_length = input_ids.shape[1]

generated_outputs = model.generate(

input_ids=input_ids,

generation_config=GenerationConfig(

temperature=0.1,

do_sample=True,

repetition_penalty=1.0,

max_new_tokens=512,

),

return_dict_in_generate=True,

pad_token_id=tokenizer.eos_token_id,

eos_token_id=tokenizer.eos_token_id,

)

generated_tokens = generated_outputs.sequences[0, input_length:]

generated_text = tokenizer.decode(generated_tokens, skip_special_tokens=True)

print(generated_text)

```

You can also infer this model by using the following Google Colab Notebook.

<a href="https://colab.research.google.com/github/bofenghuang/vigogne/blob/main/notebooks/infer_instruct.ipynb" target="_blank"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

## Limitations

Vigogne is still under development, and there are many limitations that have to be addressed. Please note that it is possible that the model generates harmful or biased content, incorrect information or generally unhelpful answers.

|

vergarajit/HuggyLlama

|

vergarajit

| 2023-07-05T11:32:32Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-06-27T00:08:30Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16

### Framework versions

- PEFT 0.4.0.dev0

|

NihalSrivastava/advertisement-description-generator

|

NihalSrivastava

| 2023-07-05T11:18:06Z | 137 | 1 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"gpt2",

"text-generation",

"en",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-04-06T05:39:06Z |

---

license: mit

language:

- en

library_name: transformers

widget:

- text: "<|BOS|>laptop, fast, gaming, great graphics, affordable <|SEP|> "

example_title: "Laptop"

- text: "<|BOS|> T-Shirt, Red color, comfortable <|SEP|> "

example_title: "T-Shirt"

- text: "<|BOS|> Game console, multiple game support, 4k graphics, extreme fast performace <|SEP|> "

example_title: "Game Console"

---

# Advertisement-Description-Generator gpt2 finetuned on product descriptions

## Model Details

This model generates a 1-2 line description of a product given the keywords associated with it. It is a fine-tuned version of GPT-2 model on a custom dataset consisting of

328 rows in the format: (keyword, text)

Example:

[Echo Dot,smart speaker,Alexa,improved sound,sleek design,home], Introducing the all-new Echo Dot - the smart speaker with Alexa. It has improved sound and a sleek design that fits anywhere in your home.

- **Developed by:** Nihal Srivastava

- **Model type:** Finetuned GPT-2

- **License:** MIT

- **Finetuned from model [optional]:** GPT-2

## Uses

##### Inital Imports of the model and setting up helper functions:

```python

from transformers import AutoTokenizer, AutoModelForCausalLM

def join_keywords(keywords, randomize=True):

N = len(keywords)

if randomize:

M = random.choice(range(N+1))

keywords = keywords[:M]

random.shuffle(keywords)

return ','.join(keywords)

SPECIAL_TOKENS = { "bos_token": "<|BOS|>",

"eos_token": "<|EOS|>",

"unk_token": "<|UNK|>",

"pad_token": "<|PAD|>",

"sep_token": "<|SEP|>"}

device = torch.device("cuda")

tokenizer = AutoTokenizer.from_pretrained("NihalSrivastava/advertisement-description-generator")

model = AutoModelForCausalLM.from_pretrained("NihalSrivastava/advertisement-description-generator").to(device)

keywords = ['laptop', 'fast', 'gaming', 'great graphics', 'affordable']

kw = join_keywords(keywords, randomize=False)

prompt = SPECIAL_TOKENS['bos_token'] + kw + SPECIAL_TOKENS['sep_token']

generated = torch.tensor(tokenizer.encode(prompt)).unsqueeze(0)

device = torch.device("cuda")

generated = generated.to(device)

model.eval();

```

##### To generate a single best description:

``` python

# Beam-search text generation:

sample_outputs = model.generate(generated,

do_sample=True,

max_length=768,

num_beams=5,

repetition_penalty=5.0,

early_stopping=True,

num_return_sequences=1

)

for i, sample_output in enumerate(sample_outputs):

text = tokenizer.decode(sample_output, skip_special_tokens=True)

a = len(','.join(keywords))

print("{}: {}\n\n".format(i+1, text[a:]))

```

Output:

> 1: The gaming laptop has a fast clock speed, high refresh rate display, and advanced connectivity options for unparalleled gaming performance.

##### To generate top 10 best descriptions:

``` python

# Top-p (nucleus) text generation (10 samples):

sample_outputs = model.generate(generated,

do_sample=True,

min_length=50,

max_length=MAXLEN,

top_k=30,

top_p=0.7,

temperature=0.9,

repetition_penalty=2.0,

num_return_sequences=10

)

for i, sample_output in enumerate(sample_outputs):

text = tokenizer.decode(sample_output, skip_special_tokens=True)

a = len(','.join(keywords))

print("{}: {}\n\n".format(i+1, text[a:]))

```

Output:

Will generate 10 best outouts of product description as above.

#### Training Hyperparameters

``` python

DEBUG = False

USE_APEX = True

APEX_OPT_LEVEL = 'O1'

MODEL = 'gpt2' #{gpt2, gpt2-medium, gpt2-large, gpt2-xl}

UNFREEZE_LAST_N = 6 #The last N layers to unfreeze for training

SPECIAL_TOKENS = { "bos_token": "<|BOS|>",

"eos_token": "<|EOS|>",

"unk_token": "<|UNK|>",

"pad_token": "<|PAD|>",

"sep_token": "<|SEP|>"}

MAXLEN = 768 #{768, 1024, 1280, 1600}

TRAIN_SIZE = 0.8

if USE_APEX:

TRAIN_BATCHSIZE = 4

BATCH_UPDATE = 16

else:

TRAIN_BATCHSIZE = 2

BATCH_UPDATE = 32

EPOCHS = 20

LR = 5e-4

EPS = 1e-8

WARMUP_STEPS = 1e2

SEED = 2020

EVALUATION_STRATEGY=epoch

SAVE_STRATEGY=epoch

```

## Model Card Contact

Email: [email protected] <br>

Github: https://github.com/Nihal-Srivastava05 <br>

LinkedIn: https://www.linkedin.com/in/nihal-srivastava-7708a71b7/ <br>

|

jordyvl/dit-rvl_maveriq_tobacco3482_2023-07-05

|

jordyvl

| 2023-07-05T10:59:00Z | 161 | 0 |

transformers

|

[

"transformers",

"pytorch",

"beit",

"image-classification",

"generated_from_trainer",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2023-07-05T10:04:59Z |

---

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: dit-rvl_maveriq_tobacco3482_2023-07-05

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# dit-rvl_maveriq_tobacco3482_2023-07-05

This model is a fine-tuned version of [microsoft/dit-base-finetuned-rvlcdip](https://huggingface.co/microsoft/dit-base-finetuned-rvlcdip) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4530

- Accuracy: 0.94

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 4

- seed: 42

- gradient_accumulation_steps: 16

- total_train_batch_size: 256

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 100

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| No log | 0.96 | 3 | 2.2927 | 0.01 |

| No log | 1.96 | 6 | 2.2632 | 0.08 |

| No log | 2.96 | 9 | 2.2334 | 0.18 |

| No log | 3.96 | 12 | 2.2025 | 0.195 |

| No log | 4.96 | 15 | 2.1686 | 0.235 |

| No log | 5.96 | 18 | 2.1274 | 0.325 |

| No log | 6.96 | 21 | 2.0784 | 0.385 |

| No log | 7.96 | 24 | 2.0284 | 0.465 |

| No log | 8.96 | 27 | 1.9750 | 0.55 |

| No log | 9.96 | 30 | 1.9206 | 0.585 |

| No log | 10.96 | 33 | 1.8683 | 0.61 |

| No log | 11.96 | 36 | 1.8164 | 0.65 |

| No log | 12.96 | 39 | 1.7660 | 0.735 |

| No log | 13.96 | 42 | 1.7195 | 0.765 |

| No log | 14.96 | 45 | 1.6761 | 0.815 |

| No log | 15.96 | 48 | 1.6336 | 0.83 |

| No log | 16.96 | 51 | 1.5918 | 0.835 |

| No log | 17.96 | 54 | 1.5511 | 0.835 |

| No log | 18.96 | 57 | 1.5101 | 0.84 |

| No log | 19.96 | 60 | 1.4699 | 0.85 |

| No log | 20.96 | 63 | 1.4307 | 0.855 |

| No log | 21.96 | 66 | 1.3925 | 0.865 |

| No log | 22.96 | 69 | 1.3534 | 0.865 |

| No log | 23.96 | 72 | 1.3164 | 0.885 |

| No log | 24.96 | 75 | 1.2825 | 0.885 |

| No log | 25.96 | 78 | 1.2458 | 0.88 |

| No log | 26.96 | 81 | 1.2091 | 0.88 |

| No log | 27.96 | 84 | 1.1762 | 0.89 |

| No log | 28.96 | 87 | 1.1446 | 0.885 |

| No log | 29.96 | 90 | 1.1126 | 0.9 |

| No log | 30.96 | 93 | 1.0840 | 0.905 |

| No log | 31.96 | 96 | 1.0549 | 0.9 |

| No log | 32.96 | 99 | 1.0247 | 0.91 |

| No log | 33.96 | 102 | 0.9962 | 0.925 |

| No log | 34.96 | 105 | 0.9685 | 0.93 |

| No log | 35.96 | 108 | 0.9447 | 0.93 |

| No log | 36.96 | 111 | 0.9217 | 0.93 |

| No log | 37.96 | 114 | 0.9007 | 0.93 |

| No log | 38.96 | 117 | 0.8778 | 0.935 |

| No log | 39.96 | 120 | 0.8551 | 0.935 |

| No log | 40.96 | 123 | 0.8325 | 0.93 |

| No log | 41.96 | 126 | 0.8129 | 0.93 |

| No log | 42.96 | 129 | 0.7970 | 0.93 |

| No log | 43.96 | 132 | 0.7810 | 0.93 |

| No log | 44.96 | 135 | 0.7609 | 0.935 |

| No log | 45.96 | 138 | 0.7441 | 0.935 |

| No log | 46.96 | 141 | 0.7313 | 0.935 |

| No log | 47.96 | 144 | 0.7184 | 0.935 |

| No log | 48.96 | 147 | 0.7044 | 0.93 |

| No log | 49.96 | 150 | 0.6902 | 0.93 |

| No log | 50.96 | 153 | 0.6773 | 0.935 |

| No log | 51.96 | 156 | 0.6666 | 0.935 |

| No log | 52.96 | 159 | 0.6554 | 0.935 |

| No log | 53.96 | 162 | 0.6446 | 0.935 |

| No log | 54.96 | 165 | 0.6308 | 0.94 |

| No log | 55.96 | 168 | 0.6194 | 0.94 |

| No log | 56.96 | 171 | 0.6098 | 0.94 |

| No log | 57.96 | 174 | 0.6021 | 0.94 |

| No log | 58.96 | 177 | 0.5922 | 0.935 |

| No log | 59.96 | 180 | 0.5820 | 0.94 |

| No log | 60.96 | 183 | 0.5735 | 0.94 |

| No log | 61.96 | 186 | 0.5632 | 0.94 |

| No log | 62.96 | 189 | 0.5559 | 0.94 |

| No log | 63.96 | 192 | 0.5494 | 0.94 |

| No log | 64.96 | 195 | 0.5430 | 0.94 |

| No log | 65.96 | 198 | 0.5370 | 0.935 |

| No log | 66.96 | 201 | 0.5320 | 0.935 |

| No log | 67.96 | 204 | 0.5278 | 0.935 |

| No log | 68.96 | 207 | 0.5228 | 0.935 |

| No log | 69.96 | 210 | 0.5166 | 0.935 |

| No log | 70.96 | 213 | 0.5117 | 0.935 |

| No log | 71.96 | 216 | 0.5076 | 0.935 |

| No log | 72.96 | 219 | 0.5029 | 0.94 |

| No log | 73.96 | 222 | 0.4985 | 0.94 |

| No log | 74.96 | 225 | 0.4945 | 0.94 |

| No log | 75.96 | 228 | 0.4904 | 0.94 |

| No log | 76.96 | 231 | 0.4865 | 0.94 |

| No log | 77.96 | 234 | 0.4833 | 0.94 |

| No log | 78.96 | 237 | 0.4804 | 0.94 |

| No log | 79.96 | 240 | 0.4779 | 0.94 |

| No log | 80.96 | 243 | 0.4757 | 0.94 |

| No log | 81.96 | 246 | 0.4738 | 0.94 |

| No log | 82.96 | 249 | 0.4719 | 0.935 |

| No log | 83.96 | 252 | 0.4701 | 0.935 |

| No log | 84.96 | 255 | 0.4684 | 0.935 |

| No log | 85.96 | 258 | 0.4669 | 0.935 |

| No log | 86.96 | 261 | 0.4653 | 0.935 |

| No log | 87.96 | 264 | 0.4637 | 0.935 |

| No log | 88.96 | 267 | 0.4620 | 0.935 |

| No log | 89.96 | 270 | 0.4602 | 0.935 |

| No log | 90.96 | 273 | 0.4586 | 0.94 |

| No log | 91.96 | 276 | 0.4572 | 0.94 |

| No log | 92.96 | 279 | 0.4562 | 0.94 |

| No log | 93.96 | 282 | 0.4553 | 0.94 |

| No log | 94.96 | 285 | 0.4546 | 0.94 |

| No log | 95.96 | 288 | 0.4540 | 0.94 |

| No log | 96.96 | 291 | 0.4535 | 0.94 |

| No log | 97.96 | 294 | 0.4532 | 0.94 |

| No log | 98.96 | 297 | 0.4530 | 0.94 |

| No log | 99.96 | 300 | 0.4530 | 0.94 |

### Framework versions

- Transformers 4.26.1

- Pytorch 1.13.1.post200

- Datasets 2.9.0

- Tokenizers 0.13.2

|

Htar/taxi-3

|

Htar

| 2023-07-05T10:10:20Z | 0 | 0 | null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-05T10:07:37Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: taxi-3

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

metrics:

- type: mean_reward

value: 7.50 +/- 2.72

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing1 **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3** .

## Usage

```python

model = load_from_hub(repo_id="Htar/taxi-3", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

```

|

Jyshen/Chat_Suzumiya_GLM2LoRA

|

Jyshen

| 2023-07-05T09:39:57Z | 0 | 1 |

peft

|

[

"peft",

"region:us"

] | null | 2023-06-10T12:56:56Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.4.0.dev0

|

thirupathibandam/autotrain-phanik-gptneo125-self-72299138912

|

thirupathibandam

| 2023-07-05T07:39:28Z | 31 | 0 |

adapter-transformers

|

[

"adapter-transformers",

"autotrain",

"text-generation",

"dataset:thirupathibandam/autotrain-data-phanik-gptneo125-self",

"co2_eq_emissions",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-07-05T07:08:38Z |

---

tags:

- autotrain

- text-generation

widget:

- text: 'I love AutoTrain because '

datasets:

- thirupathibandam/autotrain-data-phanik-gptneo125-self

co2_eq_emissions:

emissions: 0.3379436679826976

library_name: adapter-transformers

---

# Model Trained Using AutoTrain

- Problem type: Text Generation

- CO2 Emissions (in grams): 0.3379

## Validation Metrics

loss: 1.8594619035720825

|

cerspense/zeroscope_v2_1111models

|

cerspense

| 2023-07-05T06:39:40Z | 0 | 24 | null |

[

"license:cc-by-nc-4.0",

"region:us"

] | null | 2023-07-03T23:09:54Z |

---

license: cc-by-nc-4.0

---

[example outputs](https://www.youtube.com/watch?v=HO3APT_0UA4) (courtesy of [dotsimulate](https://www.instagram.com/dotsimulate/))

# zeroscope_v2 1111 models

A collection of watermark-free Modelscope-based video models capable of generating high quality video at [448x256](https://huggingface.co/cerspense/zeroscope_v2_dark_30x448x256), [576x320](https://huggingface.co/cerspense/zeroscope_v2_576w) and [1024 x 576](https://huggingface.co/cerspense/zeroscope_v2_XL). These models were trained from the [original weights](https://huggingface.co/damo-vilab/modelscope-damo-text-to-video-synthesis) with offset noise using 9,923 clips and 29,769 tagged frames.<br />

This collection makes it easy to switch between models with the new dropdown menu in the 1111 extension.

### Using it with the 1111 text2video extension

Simply download the contents of this repo to 'stable-diffusion-webui\models\text2video'

Or, manually download the model folders you want, along with VQGAN_autoencoder.pth.

Thanks to [dotsimulate](https://www.instagram.com/dotsimulate/) for the config files.

Thanks to [camenduru](https://github.com/camenduru), [kabachuha](https://github.com/kabachuha), [ExponentialML](https://github.com/ExponentialML), [VANYA](https://twitter.com/veryVANYA), [polyware](https://twitter.com/polyware_ai), [tin2tin](https://github.com/tin2tin)<br />

|

dlabs-matic-leva/segformer-b0-finetuned-segments-sidewalk-2

|

dlabs-matic-leva

| 2023-07-05T05:54:12Z | 186 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"segformer",

"vision",

"image-segmentation",

"dataset:segments/sidewalk-semantic",

"endpoints_compatible",

"region:us"

] |

image-segmentation

| 2023-07-04T07:03:03Z |

---

tags:

- vision

- image-segmentation

datasets:

- segments/sidewalk-semantic

finetuned_from: nvidia/mit-b0

widget:

- src: >-

https://datasets-server.huggingface.co/assets/segments/sidewalk-semantic/--/segments--sidewalk-semantic-2/train/3/pixel_values/image.jpg

example_title: Sidewalk example

---

|

niansong1996/lever-gsm8k-codex

|

niansong1996

| 2023-07-05T05:48:36Z | 103 | 0 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"text-classification",

"dataset:gsm8k",

"arxiv:2302.08468",

"arxiv:1910.09700",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-03T03:42:52Z |

---

license: apache-2.0

datasets:

- gsm8k

metrics:

- accuracy

model-index:

- name: lever-gsm8k-codex

results:

- task:

type: code generation # Required. Example: automatic-speech-recognition

# name: {task_name} # Optional. Example: Speech Recognition

dataset:

type: gsm8k # Required. Example: common_voice. Use dataset id from https://hf.co/datasets

name: GSM8K (Math Reasoning) # Required. A pretty name for the dataset. Example: Common Voice (French)

# config: {dataset_config} # Optional. The name of the dataset configuration used in `load_dataset()`. Example: fr in `load_dataset("common_voice", "fr")`. See the `datasets` docs for more info: https://huggingface.co/docs/datasets/package_reference/loading_methods#datasets.load_dataset.name

# split: {dataset_split} # Optional. Example: test

# revision: {dataset_revision} # Optional. Example: 5503434ddd753f426f4b38109466949a1217c2bb

# args:

# {arg_0}: {value_0} # Optional. Additional arguments to `load_dataset()`. Example for wikipedia: language: en

# {arg_1}: {value_1} # Optional. Example for wikipedia: date: 20220301

metrics:

- type: accuracy # Required. Example: wer. Use metric id from https://hf.co/metrics

value: 84.5 # Required. Example: 20.90

# name: {metric_name} # Optional. Example: Test WER

# config: {metric_config} # Optional. The name of the metric configuration used in `load_metric()`. Example: bleurt-large-512 in `load_metric("bleurt", "bleurt-large-512")`. See the `datasets` docs for more info: https://huggingface.co/docs/datasets/v2.1.0/en/loading#load-configurations

# args:

# {arg_0}: {value_0} # Optional. The arguments passed during `Metric.compute()`. Example for `bleu`: max_order: 4

verified: false # Optional. If true, indicates that evaluation was generated by Hugging Face (vs. self-reported).

---

# LEVER (for Codex on GSM8K)

This is one of the models produced by the paper ["LEVER: Learning to Verify Language-to-Code Generation with Execution"](https://arxiv.org/abs/2302.08468).

**Authors:** [Ansong Ni](https://niansong1996.github.io), Srini Iyer, Dragomir Radev, Ves Stoyanov, Wen-tau Yih, Sida I. Wang*, Xi Victoria Lin*

**Note**: This specific model is for Codex on the [GSM8K](https://github.com/openai/grade-school-math) dataset, for the models pretrained on other datasets, please see:

* [lever-spider-codex](https://huggingface.co/niansong1996/lever-spider-codex)

* [lever-wikitq-codex](https://huggingface.co/niansong1996/lever-wikitq-codex)

* [lever-mbpp-codex](https://huggingface.co/niansong1996/lever-mbpp-codex)

# Model Details

## Model Description

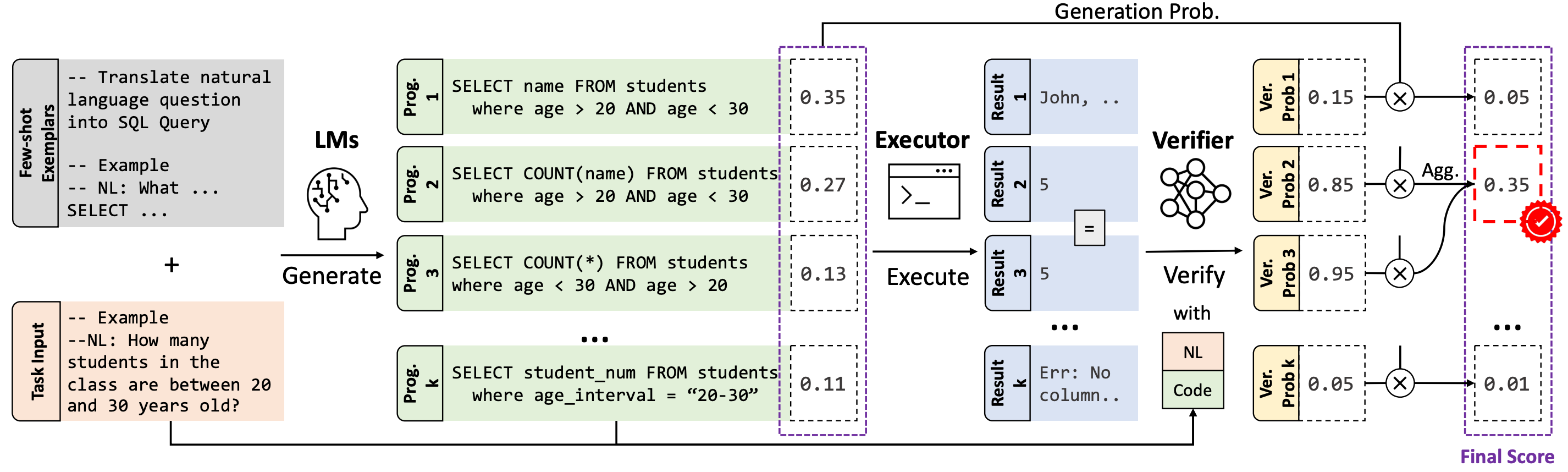

The advent of pre-trained code language models (Code LLMs) has led to significant progress in language-to-code generation. State-of-the-art approaches in this area combine CodeLM decoding with sample pruning and reranking using test cases or heuristics based on the execution results. However, it is challenging to obtain test cases for many real-world language-to-code applications, and heuristics cannot well capture the semantic features of the execution results, such as data type and value range, which often indicates the correctness of the program. In this work, we propose LEVER, a simple approach to improve language-to-code generation by learning to verify the generated programs with their execution results. Specifically, we train verifiers to determine whether a program sampled from the CodeLM is correct or not based on the natural language input, the program itself and its execution results. The sampled programs are reranked by combining the verification score with the CodeLM generation probability, and marginalizing over programs with the same execution results. On four datasets across the domains of table QA, math QA and basic Python programming, LEVER consistently improves over the base CodeLMs (4.6% to 10.9% with code-davinci-002) and achieves new state-of-the-art results on all of them.

- **Developed by:** Yale University and Meta AI

- **Shared by:** Ansong Ni

- **Model type:** Text Classification

- **Language(s) (NLP):** More information needed

- **License:** Apache-2.0

- **Parent Model:** RoBERTa-large

- **Resources for more information:**

- [Github Repo](https://github.com/niansong1996/lever)

- [Associated Paper](https://arxiv.org/abs/2302.08468)

# Uses

## Direct Use

This model is *not* intended to be directly used. LEVER is used to verify and rerank the programs generated by code LLMs (e.g., Codex). We recommend checking out our [Github Repo](https://github.com/niansong1996/lever) for more details.

## Downstream Use

LEVER is learned to verify and rerank the programs sampled from code LLMs for different tasks.

More specifically, for `lever-gsm8k-codex`, it was trained on the outputs of `code-davinci-002` on the [GSM8K](https://github.com/openai/grade-school-math) dataset. It can be used to rerank the SQL programs generated by Codex out-of-box.

Moreover, it may also be applied to other model's outputs on the GSM8K dataset, as studied in the [Original Paper](https://arxiv.org/abs/2302.08468).

## Out-of-Scope Use

The model should not be used to intentionally create hostile or alienating environments for people.

# Bias, Risks, and Limitations

Significant research has explored bias and fairness issues with language models (see, e.g., [Sheng et al. (2021)](https://aclanthology.org/2021.acl-long.330.pdf) and [Bender et al. (2021)](https://dl.acm.org/doi/pdf/10.1145/3442188.3445922)). Predictions generated by the model may include disturbing and harmful stereotypes across protected classes; identity characteristics; and sensitive, social, and occupational groups.

## Recommendations

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

# Training Details

## Training Data

The model is trained with the outputs from `code-davinci-002` model on the [GSM8K](https://github.com/openai/grade-school-math) dataset.

## Training Procedure

20 program samples are drawn from the Codex model on the training examples of the GSM8K dataset, those programs are later executed to obtain the execution information.

And for each example and its program sample, the natural language description and execution information are also part of the inputs that used to train the RoBERTa-based model to predict "yes" or "no" as the verification labels.

### Preprocessing

Please follow the instructions in the [Github Repo](https://github.com/niansong1996/lever) to reproduce the results.

### Speeds, Sizes, Times

More information needed

# Evaluation

## Testing Data, Factors & Metrics

### Testing Data

Dev and test set of the [GSM8K](https://github.com/openai/grade-school-math) dataset.

### Factors

More information needed

### Metrics

Execution accuracy (i.e., pass@1)

## Results

### GSM8K Math Reasoning via Python Code Generation

| | Exec. Acc. (Dev) | Exec. Acc. (Test) |

|-----------------|------------------|-------------------|

| Codex | 68.1 | 67.2 |

| Codex+LEVER | 84.1 | 84.5 |

# Model Examination

More information needed

# Environmental Impact

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** More information needed

- **Hours used:** More information needed

- **Cloud Provider:** More information needed

- **Compute Region:** More information needed

- **Carbon Emitted:** More information needed

# Technical Specifications [optional]

## Model Architecture and Objective

`lever-gsm8k-codex` is based on RoBERTa-large.

## Compute Infrastructure

More information needed

### Hardware

More information needed

### Software

More information needed.

# Citation

**BibTeX:**

```bibtex

@inproceedings{ni2023lever,

title={Lever: Learning to verify language-to-code generation with execution},

author={Ni, Ansong and Iyer, Srini and Radev, Dragomir and Stoyanov, Ves and Yih, Wen-tau and Wang, Sida I and Lin, Xi Victoria},

booktitle={Proceedings of the 40th International Conference on Machine Learning (ICML'23)},

year={2023}

}

```

# Glossary [optional]

More information needed

# More Information [optional]

More information needed

# Model Card Author and Contact

Ansong Ni, contact info on [personal website](https://niansong1996.github.io)

# How to Get Started with the Model

This model is *not* intended to be directly used, please follow the instructions in the [Github Repo](https://github.com/niansong1996/lever).

|

YIMMYCRUZ/distilroberta-base-mrpc-glue

|

YIMMYCRUZ

| 2023-07-05T02:08:22Z | 120 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"roberta",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-06-30T04:06:39Z |

---

license: apache-2.0

tags:

- text-classification

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

- f1

widget:

- text: ["Yucaipa owned Dominick 's before selling the chain to Safeway in 1998 for $ 2.5 billion.",

"Yucaipa bought Dominick's in 1995 for $ 693 million and sold it to Safeway for $ 1.8 billion in 1998."]

example_title: Not Equivalent

- text: ["Revenue in the first quarter of the year dropped 15 percent from the same period a year earlier.",

"With the scandal hanging over Stewart's company revenue the first quarter of the year dropped 15 percent from the same period a year earlier."]

example_title: Equivalent

model-index:

- name: distilroberta-base-mrpc-glue

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: mrpc

split: validation

args: mrpc

metrics:

- name: Accuracy

type: accuracy

value: 0.8088235294117647

- name: F1

type: f1

value: 0.8682432432432433

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilroberta-base-mrpc-glue

This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on the glue and the mrpc datasets.

It achieves the following results on the evaluation set:

- Loss: 0.6224

- Accuracy: 0.8088

- F1: 0.8682

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.508 | 1.09 | 500 | 0.6224 | 0.8088 | 0.8682 |

| 0.3303 | 2.18 | 1000 | 0.6322 | 0.8554 | 0.8963 |

### Framework versions

- Transformers 4.28.0

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

eclec/announcementClassfication

|

eclec

| 2023-07-05T00:49:35Z | 22 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-04T16:44:48Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: announcementClassfication

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# announcementClassfication

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5613

- Accuracy: 0.85

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 4.430934731021352e-05

- train_batch_size: 16

- eval_batch_size: 8

- seed: 1

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| No log | 1.0 | 15 | 0.6120 | 0.6667 |

| No log | 2.0 | 30 | 0.5613 | 0.85 |

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu118

- Datasets 2.13.1

- Tokenizers 0.13.3

|

digiplay/RMHF_2.5D_v2

|

digiplay

| 2023-07-05T00:31:26Z | 316 | 2 |

diffusers

|

[

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-07-04T23:57:21Z |

---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

Model info :

https://civitai.com/models/101518/rmhf

Sample image I made :

Original Author's DEMO image and prompt :

cat ears, pink hair, heterochromia, red eye, blue eye, blue sky, ocean, sea, seaside, beach, water, white clouds, angel wings, angel halo, feather wings, multiple wings, large wings, halo, glowing halo, energy wings, glowing wings, angel, light particles, dappled sunlight, bright, glowing eyes, unity cg, 8k wallpaper, amazing, ultra-detailed illustration

|

GabrielFerreira/ppo-HuggyTest

|

GabrielFerreira

| 2023-07-04T23:30:24Z | 10 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Huggy",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

] |

reinforcement-learning

| 2023-06-28T03:39:57Z |

---

library_name: ml-agents

tags:

- Huggy

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

---

THIS MODEL IS JUST FOR STUDY AND DOES NOT HAVE GOOD PERFORMANCE.

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how works ML-Agents:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**

1. If the environment is part of ML-Agents official environments, go to https://huggingface.co/unity

2. Step 1: Find your model_id: GabrielFerreira/ppo-HuggyPRO

3. Step 2: Select your *.nn /*.onnx file

4. Click on Watch the agent play 👀

|

tatsu-lab/alpaca-farm-ppo-human-wdiff

|

tatsu-lab

| 2023-07-04T23:05:54Z | 24 | 1 |

transformers

|

[

"transformers",

"pytorch",

"llama",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-05-24T07:38:35Z |

Please see https://github.com/tatsu-lab/alpaca_farm#downloading-pre-tuned-alpacafarm-models for details on this model.

|

Huggingfly/dqn-SpaceInvadersNoFrameskip-v4

|

Huggingfly

| 2023-07-04T20:26:00Z | 1 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"SpaceInvadersNoFrameskip-v4",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-04T20:25:25Z |

---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: DQN

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: SpaceInvadersNoFrameskip-v4

type: SpaceInvadersNoFrameskip-v4

metrics:

- type: mean_reward

value: 566.50 +/- 172.35

name: mean_reward

verified: false

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

Install the RL Zoo (with SB3 and SB3-Contrib):

```bash

pip install rl_zoo3

```

```

# Download model and save it into the logs/ folder

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga Huggingfly -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

If you installed the RL Zoo3 via pip (`pip install rl_zoo3`), from anywhere you can do:

```

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga Huggingfly -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

## Training (with the RL Zoo)

```

python -m rl_zoo3.train --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m rl_zoo3.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga Huggingfly

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 1000000.0),

('optimize_memory_usage', False),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```

# Environment Arguments

```python

{'render_mode': 'rgb_array'}

```

|

nolanaatama/jcwrldrvcv2crp300pchsmlk

|

nolanaatama

| 2023-07-04T20:08:40Z | 0 | 0 | null |

[

"license:creativeml-openrail-m",

"region:us"

] | null | 2023-07-04T20:04:26Z |

---

license: creativeml-openrail-m

---

|

Adoley/covid-tweets-sentiment-analysis-distilbert-model

|

Adoley

| 2023-07-04T19:50:48Z | 124 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"safetensors",

"distilbert",

"text-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-11T19:35:51Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: covid-tweets-sentiment-analysis-distilbert-model

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# covid-tweets-sentiment-analysis-distilbert-model

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5979

- Rmse: 0.6680

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- gradient_accumulation_steps: 16

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rmse |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 0.7464 | 2.0 | 500 | 0.5979 | 0.6680 |

| 0.4318 | 4.0 | 1000 | 0.6374 | 0.6327 |

| 0.1694 | 6.0 | 1500 | 0.9439 | 0.6311 |

| 0.072 | 8.0 | 2000 | 1.1471 | 0.6556 |

| 0.0388 | 10.0 | 2500 | 1.2217 | 0.6437 |

### Framework versions

- Transformers 4.29.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

rohanbalkondekar/adept-skunk

|

rohanbalkondekar

| 2023-07-04T19:06:11Z | 10 | 0 |

transformers

|

[

"transformers",

"pytorch",

"llama",

"text-generation",

"gpt",

"llm",

"large language model",

"h2o-llmstudio",

"en",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] |

text-generation

| 2023-07-04T18:59:30Z |

---

language:

- en

library_name: transformers

tags:

- gpt

- llm

- large language model

- h2o-llmstudio

inference: false

thumbnail: https://h2o.ai/etc.clientlibs/h2o/clientlibs/clientlib-site/resources/images/favicon.ico

---

# Model Card

## Summary

This model was trained using [H2O LLM Studio](https://github.com/h2oai/h2o-llmstudio).

- Base model: [lmsys/vicuna-7b-v1.3](https://huggingface.co/lmsys/vicuna-7b-v1.3)

## Usage